# Concepts (/docs/concepts)

The event log is the source of truth. The runtime, the inspector, and

replay all read the same shape. Every other design choice falls out of

that.

## The event log [#the-event-log]

Every run is an append-only sequence of events:

```text

seq=1 RunStarted (model, tools, system prompt, params hash)

seq=2 TurnStarted (turn id, prompt hash)

seq=3 AssistantMessageCompleted (full text, tool plans, raw response hash)

seq=4 ToolCallScheduled

seq=5 ToolCallCompleted

seq=6 TurnStarted

…

seq=N RunCompleted | RunFailed | RunCancelled (Merkle root of all priors)

```

Every event carries a `PrevHash` field equal to BLAKE3 over the canonical

CBOR of the previous event. The terminal event commits a Merkle root over

all priors. Tampering with any earlier event breaks both the chain and the

root; `eventlog.Validate` returns `ErrLogCorrupt`.
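The chaining rule can be sketched in a few lines. This is a minimal illustration, not the runtime's code: the `event` struct, `hashEvent`, `appendEvent`, and `validateChain` are hypothetical names, and SHA-256 over a plain text encoding stands in for BLAKE3 over canonical CBOR so the sketch needs only the standard library.

```go
package main

import (
	"bytes"
	"crypto/sha256"
	"errors"
	"fmt"
)

// event is a simplified, hypothetical stand-in for the real envelope.
type event struct {
	Seq      uint64
	PrevHash []byte
	Body     string
}

// hashEvent stands in for BLAKE3 over canonical CBOR. SHA-256 over a
// fixed text encoding is an assumption made to stay stdlib-only.
func hashEvent(e event) []byte {
	sum := sha256.Sum256([]byte(fmt.Sprintf("%d|%x|%s", e.Seq, e.PrevHash, e.Body)))
	return sum[:]
}

// appendEvent links a new event to the current tail of the log.
func appendEvent(log []event, body string) []event {
	e := event{Seq: uint64(len(log) + 1), Body: body}
	if len(log) > 0 {
		e.PrevHash = hashEvent(log[len(log)-1])
	}
	return append(log, e)
}

var errCorrupt = errors.New("log corrupt")

// validateChain recomputes every link and reports the first break.
func validateChain(log []event) error {
	for i := 1; i < len(log); i++ {
		if !bytes.Equal(log[i].PrevHash, hashEvent(log[i-1])) {
			return fmt.Errorf("%w: chain broken at seq %d", errCorrupt, log[i].Seq)
		}
	}
	return nil
}

// buildSampleLog returns a three-event log for experimentation.
func buildSampleLog() []event {
	var log []event
	for _, body := range []string{"RunStarted", "TurnStarted", "AssistantMessageCompleted"} {
		log = appendEvent(log, body)
	}
	return log
}

func main() {
	log := buildSampleLog()
	fmt.Println("clean:", validateChain(log))

	log[1].Body = "tampered" // edit an earlier event in place
	fmt.Println("tampered:", validateChain(log))
}
```

The chain alone cannot detect an edit to the last event, since nothing links to it yet; that gap is what the Merkle root in the terminal event closes.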

The full schema lives on the [Event schema](/docs/events) page.

## The determinism contract [#the-determinism-contract]

Starling borrows Temporal's determinism model. The agent loop is allowed

to do exactly two things:

1. Read events from the log.

2. Emit commands that the runtime reifies as new events.

Anything else (wall clock, RNG, HTTP, filesystem) must go through the
`step` package:

```go

now := step.Now(ctx) // recorded once, returned on replay

n := step.Random(ctx) // same idea, deterministic

val, err := step.SideEffect(ctx, "name", func() (T, error) {

// any non-deterministic effect goes here

})

```

Live: append a `SideEffectRecorded` event. Replay: read the recorded
value, skip the closure. The `name` argument is the lookup key: reuse
it for the same logical effect, change it when the effect changes.
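The live-versus-replay behaviour can be illustrated with a toy recorder. Everything here is hypothetical (`recorder`, `entry`, `sideEffect`), and the lookup strategy, reading recordings in order and checking the name, is an assumption of the sketch rather than a description of how `step.SideEffect` is implemented.

```go
package main

import (
	"errors"
	"fmt"
)

// entry stands in for one SideEffectRecorded event.
type entry struct {
	Name  string
	Value int
}

// recorder is a hypothetical stand-in for the runtime's side-effect log.
type recorder struct {
	replay   bool
	recorded []entry
	cursor   int
}

var errDiverged = errors.New("side effect does not match recording")

// sideEffect runs fn and records its value when live. On replay it
// returns the recorded value and never calls fn.
func (r *recorder) sideEffect(name string, fn func() (int, error)) (int, error) {
	if r.replay {
		if r.cursor >= len(r.recorded) || r.recorded[r.cursor].Name != name {
			return 0, errDiverged
		}
		v := r.recorded[r.cursor].Value
		r.cursor++
		return v, nil
	}
	v, err := fn()
	if err != nil {
		return 0, err
	}
	r.recorded = append(r.recorded, entry{Name: name, Value: v})
	return v, nil
}

func main() {
	calls := 0
	effect := func() (int, error) { calls++; return 42, nil }

	live := &recorder{}
	v1, _ := live.sideEffect("fetch-price", effect)

	replayed := &recorder{replay: true, recorded: live.recorded}
	v2, _ := replayed.sideEffect("fetch-price", effect)

	fmt.Println("live:", v1, "replay:", v2, "closure calls:", calls)
}
```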

## Replay [#replay]

`starling.Replay(ctx, log, runID, agent)` re-executes a recorded run

against the same agent wiring. Every event the loop attempts to emit is

compared to the recording at the matching seq:

* `Kind` mismatch: the loop produced a different event type.

* `Payload` mismatch: same kind, different bytes.

* `Exhausted`: the loop ran past the end of the recording.

Mismatches surface as a typed `*replay.Divergence` carrying `RunID`,
`Seq`, `Kind`, `ExpectedKind`, `Class`, and `Reason`. Use
`errors.Is(err, starling.ErrNonDeterminism)` to detect a divergence and
`errors.As` to get the structured fields.
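The `errors.Is` / `errors.As` pattern looks roughly like the sketch below. The `divergence` type and `errNonDeterminism` sentinel are local stand-ins that mirror the field names listed above; how the real `*replay.Divergence` relates to `starling.ErrNonDeterminism` internally is an assumption here (an `Is` method), and `fakeReplay` is a hypothetical placeholder for a replay call that found a mismatch.

```go
package main

import (
	"errors"
	"fmt"
)

// errNonDeterminism is a local stand-in for the sentinel error.
var errNonDeterminism = errors.New("non-determinism detected")

// divergence is a local stand-in carrying the documented fields.
type divergence struct {
	RunID        string
	Seq          uint64
	Kind         string
	ExpectedKind string
	Class        string
	Reason       string
}

func (d *divergence) Error() string {
	return fmt.Sprintf("run %s diverged at seq %d (%s): %s", d.RunID, d.Seq, d.Class, d.Reason)
}

// Is lets errors.Is(err, errNonDeterminism) match any *divergence.
func (d *divergence) Is(target error) bool { return target == errNonDeterminism }

// fakeReplay stands in for a replay call that found a mismatch.
func fakeReplay() error {
	d := &divergence{
		RunID: "run-1", Seq: 4,
		Kind: "ToolCallFailed", ExpectedKind: "ToolCallScheduled",
		Class: "Kind", Reason: "the loop produced a different event type",
	}
	return fmt.Errorf("replay: %w", d)
}

// isNonDeterminism is the cheap yes/no check.
func isNonDeterminism(err error) bool { return errors.Is(err, errNonDeterminism) }

// asDivergence extracts the structured fields when present.
func asDivergence(err error) (*divergence, bool) {
	var d *divergence
	ok := errors.As(err, &d)
	return d, ok
}

func main() {
	err := fakeReplay()
	if isNonDeterminism(err) {
		if d, ok := asDivergence(err); ok {
			fmt.Printf("seq %d: got %s, recorded %s\n", d.Seq, d.Kind, d.ExpectedKind)
		}
	}
}
```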

Replay never calls the provider. Tool execution still runs but reads

recorded results out of the log when wrapped in `step.SideEffect`.

## Resume [#resume]

When a run crashes mid-flight, `(*Agent).Resume(ctx, runID, extra)`

reconstructs the conversation state from the log and re-enters the agent

loop in a new process. Pending tool calls are re-issued under fresh

`CallID`s; the orphaned `ToolCallScheduled` from the prior process stays

in the log for audit. A `RunResumed` seam event marks the boundary.

## Tools [#tools]

A tool is anything implementing `tool.Tool`:

```go

type Tool interface {

Name() string

Description() string

Schema() json.RawMessage // JSON Schema for input

Execute(ctx context.Context, in json.RawMessage) (json.RawMessage, error)

}

```

`tool.Typed[In, Out](name, description, fn)` is the convenience wrapper

that derives the JSON Schema from your input type via reflection. For

non-deterministic tools (HTTP, filesystem, anything beyond pure compute),

wrap the work in `step.SideEffect` so replay returns the recorded result.
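As an illustration, a hand-written tool might look like the sketch below. The `Tool` interface is copied locally from the definition above so the example compiles on its own; `upperTool` and `runUpper` are hypothetical. The tool is pure compute, so it needs no `step.SideEffect` wrapper.

```go
package main

import (
	"context"
	"encoding/json"
	"fmt"
	"strings"
)

// Tool mirrors the interface shown above so this sketch is self-contained.
type Tool interface {
	Name() string
	Description() string
	Schema() json.RawMessage
	Execute(ctx context.Context, in json.RawMessage) (json.RawMessage, error)
}

// upperTool is a hypothetical pure-compute tool.
type upperTool struct{}

func (upperTool) Name() string        { return "upper" }
func (upperTool) Description() string { return "Upper-cases the input text." }

// Schema returns a hand-written JSON Schema for the input.
func (upperTool) Schema() json.RawMessage {
	return json.RawMessage(`{"type":"object","properties":{"text":{"type":"string"}},"required":["text"]}`)
}

// Execute decodes the input, transforms it, and encodes the result.
func (upperTool) Execute(ctx context.Context, in json.RawMessage) (json.RawMessage, error) {
	var args struct {
		Text string `json:"text"`
	}
	if err := json.Unmarshal(in, &args); err != nil {
		return nil, fmt.Errorf("upper: decode input: %w", err)
	}
	return json.Marshal(map[string]string{"text": strings.ToUpper(args.Text)})
}

// runUpper exercises the tool without any runtime around it.
func runUpper(input string) (string, error) {
	var t Tool = upperTool{}
	out, err := t.Execute(context.Background(), json.RawMessage(input))
	return string(out), err
}

func main() {
	out, err := runUpper(`{"text":"hello"}`)
	fmt.Println(out, err)
}
```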

## Budgets [#budgets]

Four axes:

| Axis | Where enforced |

| ----------------- | --------------------------------------- |

| `MaxInputTokens` | Pre-call, before every LLM call |

| `MaxOutputTokens` | Mid-stream, on every usage chunk |

| `MaxUSD` | Mid-stream, using per-model prices |

| `MaxWallClock` | `context.WithDeadline` wrapping the run |

A trip emits a `BudgetExceeded` event with `(limit, cap, actual, where)`

and unwinds the run with `RunFailed{ErrorType:"budget"}`. Budgets are

inline runtime checks, not after-the-fact dashboards.
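A mid-stream check of this kind could be sketched as follows. The function name, the treatment of zero as "no limit", and the choice that hitting the cap exactly is still allowed are all assumptions of the sketch; only the `(limit, cap, actual, where)` tuple comes from the text above.

```go
package main

import "fmt"

// budgetExceeded mirrors the (limit, cap, actual, where) tuple.
type budgetExceeded struct {
	Limit  string
	Cap    float64
	Actual float64
	Where  string
}

// checkOutputTokens is a hypothetical mid-stream check: it accumulates
// usage chunk by chunk and trips as soon as the running total passes
// the cap. A cap of zero is treated as "no limit" (an assumption).
func checkOutputTokens(maxOutputTokens int64, usageChunks []int64) *budgetExceeded {
	var total int64
	for _, n := range usageChunks {
		total += n
		if maxOutputTokens > 0 && total > maxOutputTokens {
			return &budgetExceeded{
				Limit:  "output_tokens",
				Cap:    float64(maxOutputTokens),
				Actual: float64(total),
				Where:  "mid_stream",
			}
		}
	}
	return nil
}

func main() {
	if trip := checkOutputTokens(100, []int64{40, 40, 40, 40}); trip != nil {
		fmt.Printf("%+v\n", *trip)
	}
}
```

The point of checking inside the loop is that the fourth chunk is never consumed: the run unwinds at the first chunk that crosses the cap.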

## Backends [#backends]

Three event-log implementations:

* `eventlog.NewInMemory()`: tests, demos, ephemeral CLI tools.

* `eventlog.NewSQLite(path)`: single-host services. WAL mode + per-run

`_txlock=immediate` makes one-writer-many-readers correct.

* `eventlog.NewPostgres(db)`: multi-host services. Per-run advisory

locks serialize appenders by run.

All three satisfy `EventLog` and share the migration contract.

# Event schema (/docs/events)

Wire-format reference for the event log.

## Envelope [#envelope]

Every event is wrapped in the same struct:

```go

type Event struct {

RunID string `cbor:"run_id"` // ULID, per-run identifier

Seq uint64 `cbor:"seq"` // monotonic per run, starts at 1

PrevHash []byte `cbor:"prev_hash"` // BLAKE3 of canonical CBOR of prev event

Timestamp int64 `cbor:"ts"` // unix nanoseconds (from step.Now)

Kind Kind `cbor:"kind"` // discriminator

Payload cborenc.RawMessage `cbor:"payload"` // kind-specific struct, CBOR-encoded

}

```

Hash chain: `ev.PrevHash = BLAKE3(CanonicalCBOR(prevEvent))`. The first

event has empty `PrevHash`. Canonical CBOR follows RFC 8949 §4.2:

shortest integer form, sorted map keys, no indefinite-length items,

shortest float that round-trips.

## Event kinds [#event-kinds]

Closed set. Adding a kind is a schema-version bump.

### Emitted by the runtime today [#emitted-by-the-runtime-today]

| # | Kind | Emitted by |

| -- | --------------------------- | ------------------------------------------- |

| 1 | `RunStarted` | First event of every run. |

| 2 | `UserMessageAppended` | `Resume` injecting an extra message. |

| 3 | `TurnStarted` | `step.LLMCall` pre-call. |

| 4 | `ReasoningEmitted` | Provider reasoning blocks (optional). |

| 5 | `AssistantMessageCompleted` | `step.LLMCall` on `ChunkEnd`. |

| 6 | `ToolCallScheduled` | `step.CallTool` / `CallTools` pre-dispatch. |

| 7 | `ToolCallCompleted` | Tool returned successfully. |

| 8 | `ToolCallFailed` | Tool returned an error. |

| 9 | `SideEffectRecorded` | `step.Now`, `Random`, `SideEffect`. |

| 10 | `BudgetExceeded` | Budget axis tripped. |

| 12 | `RunCompleted` | Terminal: successful run. |

| 13 | `RunFailed` | Terminal: error path. |

| 14 | `RunCancelled` | Terminal: ctx cancelled. |

| 15 | `RunResumed` | Non-terminal seam from `Resume`. |

### Reserved (defined in schema, not emitted by core) [#reserved-defined-in-schema-not-emitted-by-core]

| # | Kind | Notes |

| -- | ------------------ | ----------------------------------------------------------------------------------------- |

| 11 | `ContextTruncated` | Reserved for context-window trim strategies. Validate accepts; core does not emit. |

| 16 | `TurnFailed` | Reserved for mid-turn streaming failure with retry. Validate accepts; core does not emit. |

## Payloads [#payloads]

Payloads are CBOR-encoded structs. The highlights:

### `RunStarted` (kind 1) [#runstarted-kind-1]

Pins the entire deterministic surface of the run.

```go

type RunStarted struct {

SchemaVersion uint32

Goal string

ProviderID string

ModelID string

APIVersion string

ParamsHash []byte

Params cborenc.RawMessage

SystemPromptHash []byte

SystemPrompt string

ToolRegistryHash []byte

ToolSchemas []ToolSchemaRef

Budget *BudgetLimits

StarlingVersion string // linked module version

AppVersion string // caller-supplied

}

```

### `TurnStarted` / `AssistantMessageCompleted` (kinds 3, 5) [#turnstarted--assistantmessagecompleted-kinds-3-5]

```go

type TurnStarted struct {

TurnID string

PromptHash []byte

InputTokens int64

}

type AssistantMessageCompleted struct {

TurnID string

Text string

ToolUses []PlannedToolUse

StopReason string

InputTokens int64

OutputTokens int64

CacheReadTokens int64

CacheCreateTokens int64

CostUSD float64

RawResponseHash []byte // BLAKE3 of canonicalized provider response

ProviderRequestID string

}

```

### `ReasoningEmitted` (kind 4) [#reasoningemitted-kind-4]

Optional. Anthropic-only fields are populated only when the provider

returned them; OpenAI reasoning summaries arrive without a signature.

```go

type ReasoningEmitted struct {

TurnID string

Content string

Sensitive bool

Signature []byte // Anthropic per-block integrity signature

Redacted bool // true when Content is the opaque redacted_thinking payload

}

```

### `ToolCallScheduled` / `Completed` / `Failed` (kinds 6, 7, 8) [#toolcallscheduled--completed--failed-kinds-6-7-8]

```go

type ToolCallScheduled struct {

CallID string

TurnID string

ToolName string

Args cborenc.RawMessage

Attempt uint32

IdempKey string

}

type ToolCallCompleted struct {

CallID string

Result cborenc.RawMessage

DurationMs int64

Attempt uint32

}

type ToolCallFailed struct {

CallID string

Error string

ErrorType string // "timeout" | "panic" | "tool" | "cancelled"

DurationMs int64

Attempt uint32

}

```

Retries share `CallID` with incrementing `Attempt`.

### `SideEffectRecorded` (kind 9) [#sideeffectrecorded-kind-9]

Captures any non-deterministic value the agent loop consumed. `step.Now`

emits one with `Name: "now"`; `step.Random` with `"rand"`; user calls

to `step.SideEffect` set their own name.

```go

type SideEffectRecorded struct {

Name string

Value cborenc.RawMessage

}

```

### `BudgetExceeded` (kind 10) [#budgetexceeded-kind-10]

```go

type BudgetExceeded struct {

Limit string // "input_tokens" | "output_tokens" | "usd" | "wall_clock"

Cap float64

Actual float64

Where string // "pre_call" | "mid_stream" | "post_call"

TurnID string

CallID string

PartialText string

PartialTokens int64

}

```

### `RunCompleted` / `Failed` / `Cancelled` (kinds 12, 13, 14) [#runcompleted--failed--cancelled-kinds-12-13-14]

All three terminals carry a `MerkleRoot []byte` over the BLAKE3 hashes

of every prior event. `RunCompleted` adds totals (turn count, tool-call

count, USD, tokens, duration). `RunFailed` adds an error string and

classification. `RunCancelled` adds a reason.

### `RunResumed` (kind 15) [#runresumed-kind-15]

```go

type RunResumed struct {

AtSeq uint64 // last seq from the prior process

ExtraMessage string

ReissueTools bool

PendingCalls int

}

```

A seam, not a terminal. Marks the boundary between a crashed run's

prior process and its resuming process. Validation pairing rules treat

it as a reset point: orphaned tool-call schedules from before the

seam don't need outcomes after it.

Diagram (resume seam): in process 1, a `ToolCallScheduled` is left as an
orphan when the process crashes. In process 2 (resume), the `RunResumed`
seam is followed by a `ToolCallScheduled` with a fresh `CallID`, then
`ToolCallCompleted` and `RunCompleted`.

## Invariants [#invariants]

`eventlog.Validate` enforces:

1. Slice non-empty, `events[0].Seq == 1`, monotonic seq with no gaps.

2. `RunID` consistent and non-empty across all events.

3. Hash chain unbroken: each `PrevHash` equals BLAKE3 of canonical

CBOR of the previous event.

4. Exactly one terminal event, and it's the last event.

5. First event is `RunStarted` with `SchemaVersion ∈ [1, current]`.

6. **Turn pairing.** Every `TurnStarted` is closed by a same-`TurnID`

`AssistantMessageCompleted` or `BudgetExceeded` before the next

`TurnStarted`. An open turn at the terminal is allowed only when

the terminal is `RunFailed` or `RunCancelled`.

7. **Call pairing.** Every `ToolCallScheduled` has exactly one matching

`ToolCallCompleted` or `ToolCallFailed` with the same `(CallID,

Attempt)`. Outcomes without a prior schedule, or duplicate outcomes

for the same key, are rejected. A `RunResumed` seam clears pending

pairing state.

8. Merkle root in the terminal payload matches the recomputed BLAKE3

Merkle root over every pre-terminal event.

Invalid log → `ErrLogCorrupt` with a diagnostic locating the violation.
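Rule 7 (call pairing) is the least obvious of these, so here is a minimal sketch of one way to check it. The `ev` type and `checkCallPairing` are hypothetical and operate on a stripped-down event; rejecting any schedule still unmatched at the end of the slice is a simplifying assumption, not a statement about how `eventlog.Validate` treats failed or cancelled runs.

```go
package main

import (
	"errors"
	"fmt"
)

// ev is a stripped-down, hypothetical event for this sketch.
type ev struct {
	Kind    string
	CallID  string
	Attempt uint32
}

// callKey is the (CallID, Attempt) pairing key.
type callKey struct {
	CallID  string
	Attempt uint32
}

var errLogCorrupt = errors.New("log corrupt")

// checkCallPairing tracks open schedules and matches outcomes to them.
func checkCallPairing(events []ev) error {
	pending := map[callKey]bool{}
	for i, e := range events {
		k := callKey{e.CallID, e.Attempt}
		switch e.Kind {
		case "ToolCallScheduled":
			if pending[k] {
				return fmt.Errorf("%w: duplicate schedule at index %d", errLogCorrupt, i)
			}
			pending[k] = true
		case "ToolCallCompleted", "ToolCallFailed":
			// Covers both outcomes without a schedule and duplicate outcomes.
			if !pending[k] {
				return fmt.Errorf("%w: unmatched outcome at index %d", errLogCorrupt, i)
			}
			delete(pending, k)
		case "RunResumed":
			pending = map[callKey]bool{} // the seam clears pending pairing state
		}
	}
	if len(pending) > 0 {
		return fmt.Errorf("%w: %d schedule(s) without an outcome", errLogCorrupt, len(pending))
	}
	return nil
}

func main() {
	events := []ev{
		{Kind: "ToolCallScheduled", CallID: "C1", Attempt: 1},
		{Kind: "RunResumed"},
		{Kind: "ToolCallScheduled", CallID: "C3", Attempt: 1},
		{Kind: "ToolCallCompleted", CallID: "C3", Attempt: 1},
	}
	fmt.Println(checkCallPairing(events))
}
```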

## Worked example [#worked-example]

A run with one LLM turn, two parallel tool calls, then a final summary

turn:

```text

seq=1 RunStarted prev=nil

seq=2 TurnStarted prev=H(seq1) turn=T1

seq=3 AssistantMessageCompleted prev=H(seq2) turn=T1, tool_uses=[C1, C2]

seq=4 ToolCallScheduled prev=H(seq3) call=C1, attempt=1

seq=5 ToolCallScheduled prev=H(seq4) call=C2, attempt=1

seq=6 ToolCallCompleted prev=H(seq5) call=C2, attempt=1

seq=7 ToolCallCompleted prev=H(seq6) call=C1, attempt=1

seq=8 TurnStarted prev=H(seq7) turn=T2

seq=9 AssistantMessageCompleted prev=H(seq8) turn=T2, no tool_uses

seq=10 RunCompleted prev=H(seq9) merkle_root=M(seq1..seq9)

```

Parallel tool completions land in arrival order: replay reproduces

that ordering deterministically because results are read from the log,

not re-executed.

## Size expectations [#size-expectations]

Order of magnitude per event:

| Kind | Typical size |

| ----------------------------- | ------------ |

| `RunStarted` | 1–10 KB |

| `TurnStarted` | under 1 KB |

| `AssistantMessageCompleted` | 2–50 KB |

| `ToolCallScheduled/Completed` | 1–20 KB each |

| Terminals | under 1 KB |

A typical 5-turn run with 10 tool calls is roughly 100–500 KB. The retention
patterns are documented under [Operations](/docs/operations).

## Schema evolution [#schema-evolution]

Pre-1.0: any change permitted, schema-version bump on breaks.

After 1.0:

* Additive (new optional fields, new kinds) → minor bump.

* Breaking → major bump.

* Replayer refuses logs whose schema version is newer than its own major.

* Old logs remain replayable forever: pin the binary version, or use the

migration tools shipped at the major bump.

# Welcome (/docs)

A Go runtime for building LLM agents where every run is an append-only,

BLAKE3-chained, Merkle-rooted event log. When an agent fails in production,

inspect the log, replay it against the same agent wiring, and see the exact

step where today's behavior diverges.

## For AI assistants [#for-ai-assistants]

[**/llms-full.txt**](/llms-full.txt) packs the full docs into one file.
Paste it into your assistant's context and it will know every type,
signature, and convention in the runtime.


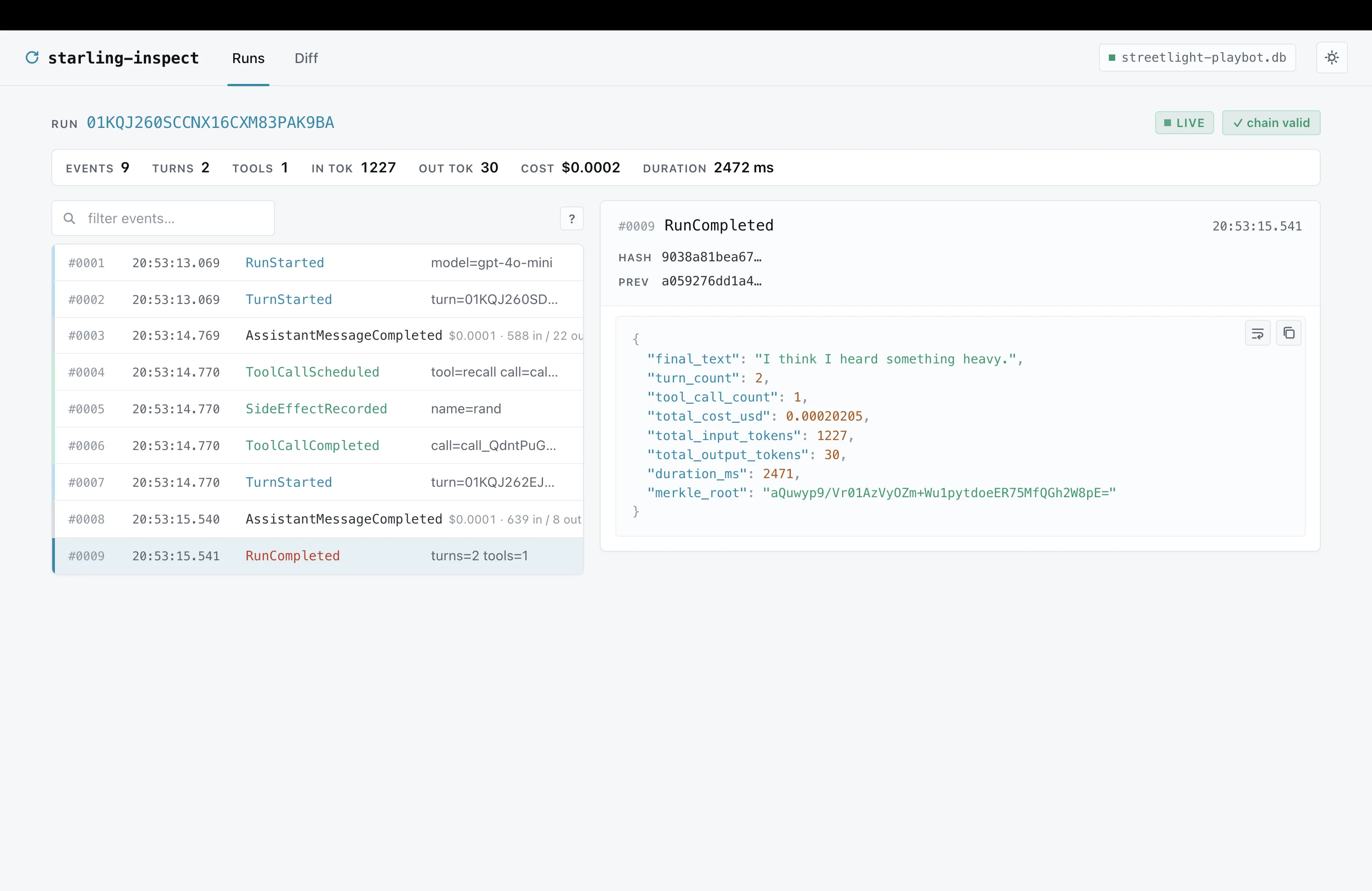

# Inspector (/docs/inspector)

`starling-inspect` opens a SQLite event log read-only and serves a

self-contained UI on loopback. No CDN, no JS build, never writes

back to the log.

```bash title="terminal"

go run github.com/jerkeyray/starling/cmd/starling-inspect runs.db

```

`--help` lists every flag. For non-local access, see

[Operations → Inspector auth and TLS](/docs/operations#inspector-auth-and-tls).

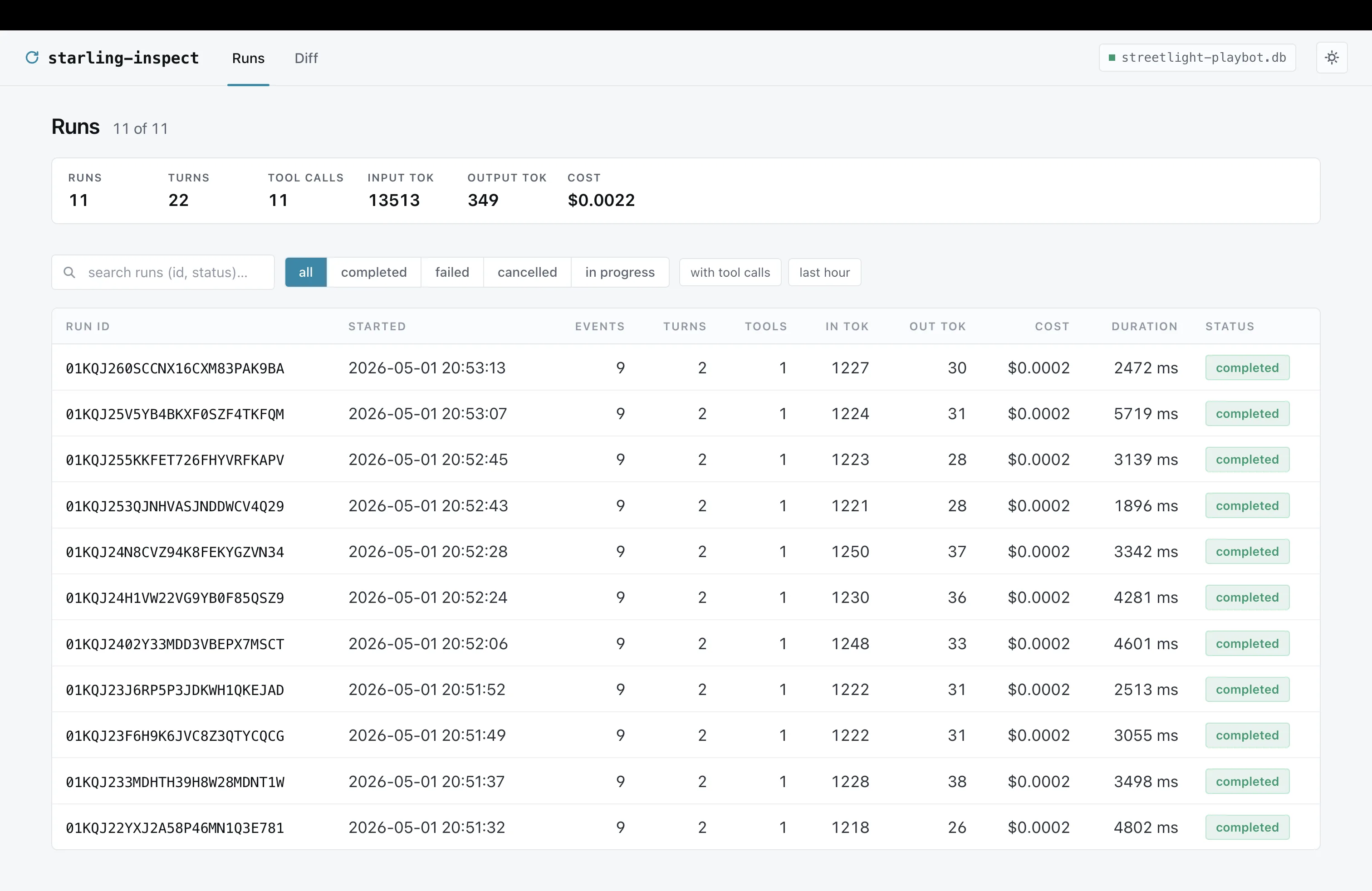

## Runs list [#runs-list]

Per-run totals up top, status tabs and preset chips for narrowing,

substring search on run id (`/` focuses). The runs list is paged on

the server: 50 rows by default, up to 200 with `?per_page=200`.

Filters and search terms are preserved when paging. Totals reflect the

currently visible page, while the pager shows the full matching count.


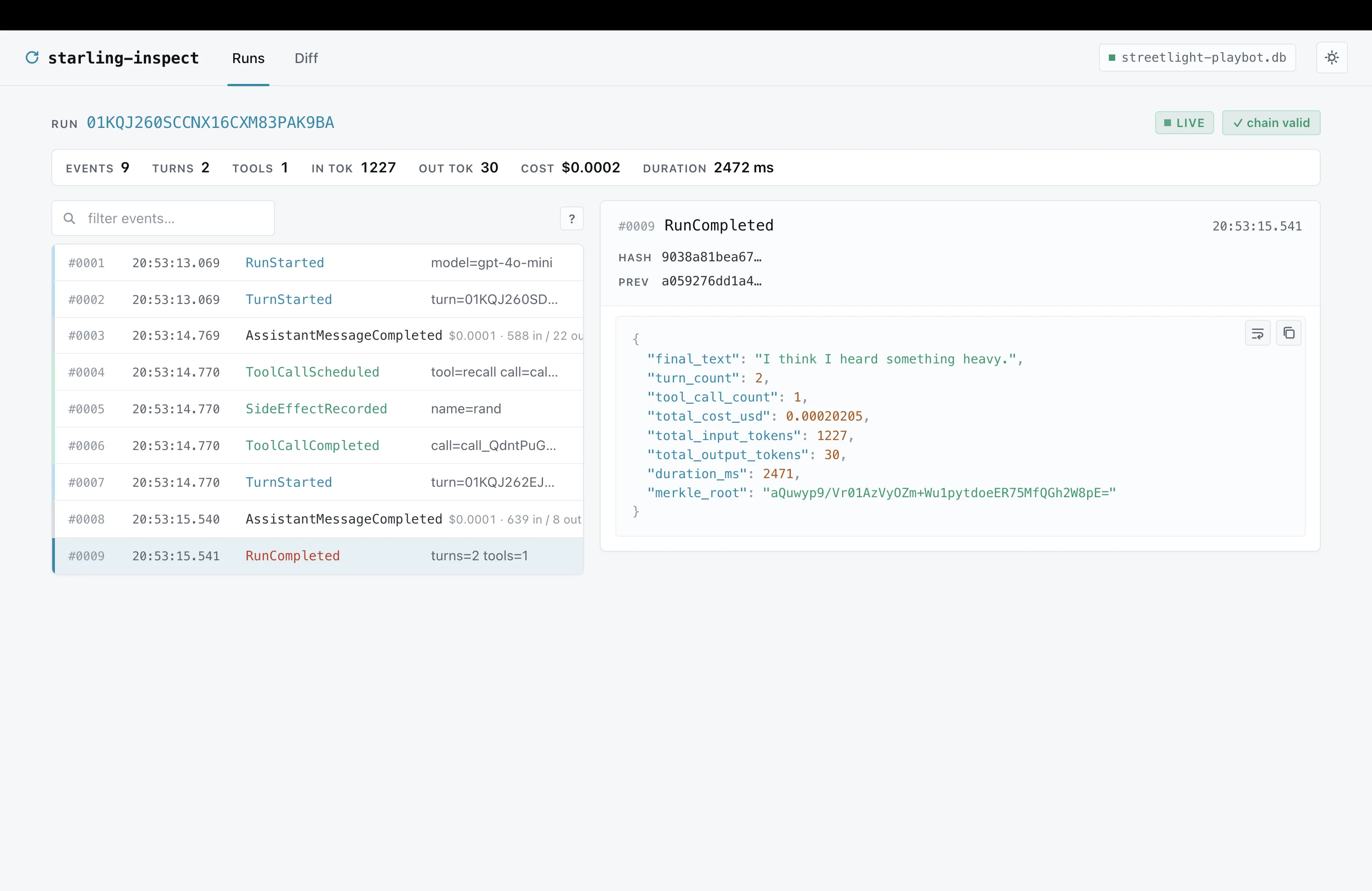

## Run detail [#run-detail]

Timeline on the left (color-coded by event family, inline

cost/token chips). Detail pane on the right with a sticky meta

header - hash, prev hash, call id are click-to-copy - and a

syntax-highlighted JSON body that wraps long strings by default.

Press `?` in the timeline header for the keyboard shortcuts.

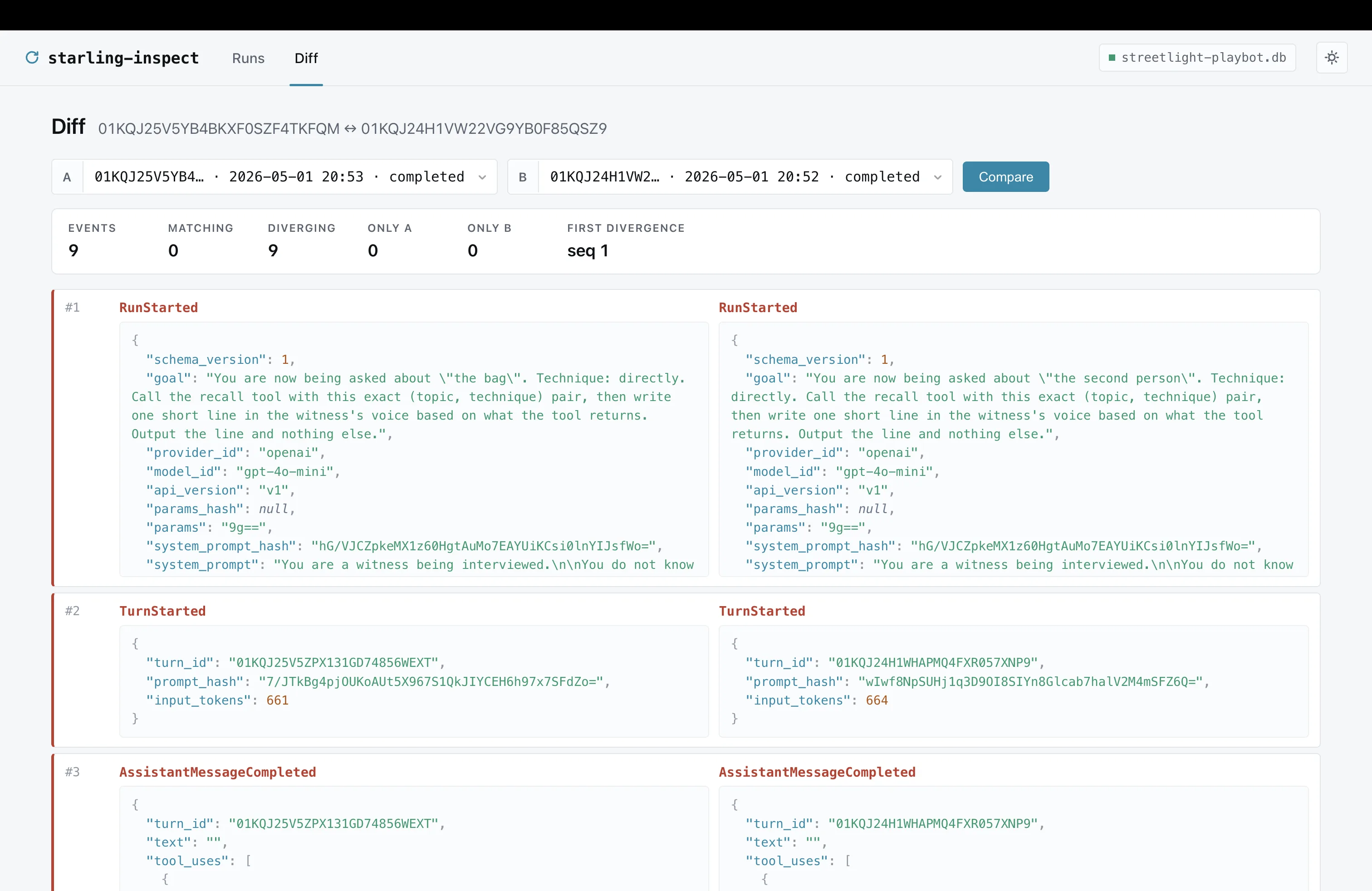


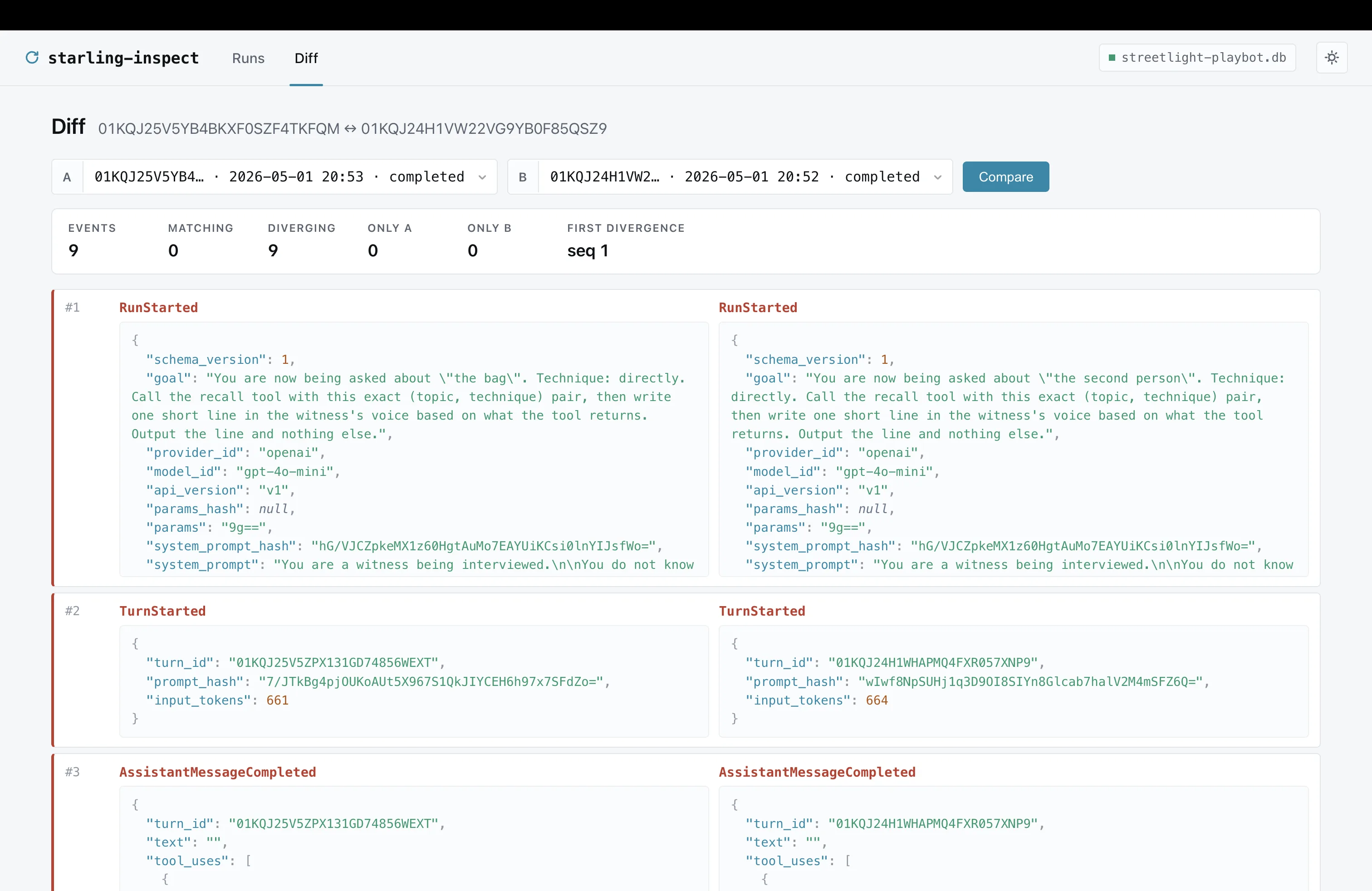

## Diff [#diff]

`/diff` aligns two runs by sequence. Pick A and B from the latest 100
runs in the dropdowns and hit Compare. Older runs can still be compared by
opening `/diff?a={runIDA}&b={runIDB}` directly. Diverging rows get a red
left rail, and a summary strip up top counts matches and diffs and points
at the first divergence.
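Aligning by sequence is simple enough to sketch. The code below is an illustration under assumptions, not the inspector's implementation: each run is reduced to a slice of strings standing in for encoded events, and the row labels `match`, `diff`, `only-a`, and `only-b` are borrowed from the `diff_runs` tool described on the MCP server page.

```go
package main

import "fmt"

// row is one aligned line of the diff.
type row struct {
	Seq    int
	Status string // "match", "diff", "only-a", or "only-b"
}

// diffRuns aligns two runs by sequence number. Each element stands in
// for one event's encoded bytes. firstDivergence is 0 when the runs
// are identical.
func diffRuns(a, b []string) (rows []row, firstDivergence int) {
	n := len(a)
	if len(b) > n {
		n = len(b)
	}
	for i := 0; i < n; i++ {
		status := "match"
		switch {
		case i >= len(b):
			status = "only-a"
		case i >= len(a):
			status = "only-b"
		case a[i] != b[i]:
			status = "diff"
		}
		if status != "match" && firstDivergence == 0 {
			firstDivergence = i + 1 // seq numbers start at 1
		}
		rows = append(rows, row{Seq: i + 1, Status: status})
	}
	return rows, firstDivergence
}

func main() {
	rows, first := diffRuns(
		[]string{"RunStarted", "TurnStarted", "RunCompleted"},
		[]string{"RunStarted", "TurnStarted*"},
	)
	fmt.Println(rows, "first divergence at seq", first)
}
```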

## Replaying a run [#replaying-a-run]

A **Replay** button shows up on the run page when the inspector is

built into a dual-mode binary that wires

`starling.InspectCommand(factory)`. It opens a side-by-side

recorded-vs-reproduced timeline; divergence surfaces as a toast

plus a click-through dialog. The source log is never written to.

```go title="cmd/myagent/main.go"

if len(os.Args) > 1 && os.Args[1] == "inspect" {

return starling.InspectCommand(myFactory).Run(os.Args[2:])

}

```

## Embedding the inspector [#embedding-the-inspector]

If you want to mount the inspector in a larger HTTP server, build it

directly:

```go

import "github.com/jerkeyray/starling/inspect"

srv, err := inspect.New(log,

inspect.WithAuth(inspect.BearerAuth(os.Getenv("STARLING_INSPECT_TOKEN"))),

inspect.WithReplayer(myFactory), // optional: enables Replay button

inspect.WithDBPath("/var/lib/runs.db"), // optional: shows the DB basename in the topbar context chip

)

if err != nil { return err }

http.Handle("/runs/", http.StripPrefix("/runs", srv))

```

`WithDBPath` populates a small chip in the inspector topbar with

the database's basename (full path on hover) so operators can see

which log the UI is pointing at when several inspectors run side

by side. The standalone CLI passes it automatically.

- [Operations](/docs/operations): Deploy the inspector behind TLS, bearer auth, retention.

- [Replay tests](/docs/replay-tests): Drive the divergence machinery from Go tests.

# MCP server (inbound) (/docs/mcp-server)

This page is the inbound MCP **server** - AI assistants querying

your event log. For the outbound **client** that lets agents call

external MCP servers as tools, see [MCP tools](/docs/mcp-tools).

`starling-mcp` exposes a recorded event log to AI assistants over the

Model Context Protocol. Once installed, your AI client (Claude

Desktop, Cursor, Claude Code) can answer natural-language questions

about your agent's runs without you copy-pasting JSON.

The server is read-only by construction: the SQLite handle is opened

with `eventlog.WithReadOnly`, and only read tools are registered. It

runs as a stdio subprocess of the AI client; nothing opens a network

port.

## Install [#install]

```bash

go install github.com/jerkeyray/starling/cmd/starling-mcp@latest

```

Or build from source:

```bash

go build -o ~/bin/starling-mcp ./cmd/starling-mcp

```

The `starling` CLI also bundles it as a subcommand:

`starling mcp ` is identical.

## Wire into an MCP client [#wire-into-an-mcp-client]

**Claude Desktop** - `~/Library/Application Support/Claude/claude_desktop_config.json`

on macOS:

```json

{

"mcpServers": {

"starling": {

"command": "starling-mcp",

"args": ["/Users/me/.hearsay/saves/playbot.db"]

}

}

}

```

**Cursor** - `~/.cursor/mcp.json` (same shape).

**Claude Code** - add via `claude mcp add starling starling-mcp /path/to/runs.db`.

Restart the client. The server starts when the client first

connects, exits when the client disconnects, and re-reads the DB

file on every tool call so new events are visible immediately.

## Tools [#tools]

Seven read-only tools. All return JSON; arguments are typed.

| Tool | What it does |

| --------------- | --------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- |

| `list_runs` | Enumerate recorded runs newest-first. Filters: `status`, `query` (substring match on run id + status), `since` (RFC3339), `with_tool_calls`. Page with `limit` (default 50, max 200) + `offset`. |

| `get_run` | Return the run summary plus every event with hashes and decoded payloads. Caps at 1000 events; use `offset` to page when truncated. |

| `get_event` | One event by `(run_id, seq)`, with hash, prev\_hash, kind, decoded payload. |

| `summarize_run` | Turn count, tool-call count, in/out tokens, USD cost, duration, terminal kind, final assistant text. |

| `validate_run` | Verify the BLAKE3 hash chain. Returns `{ok: true}` on a clean chain, otherwise `{ok: false, reason: "..."}`. |

| `diff_runs` | Align two runs by sequence and classify each row as `match`, `diff`, `only-a`, or `only-b`. Includes the first-divergence sequence number. |

| `search_runs` | Naive substring/kind scan across a bounded run page. Requires at least one of `query` or `kind`; cap hits with `limit` (default 50, max 500), scanned runs with `run_limit` (default 200, max 1000), and inspected events with `max_examined_events` (default 10000, max 50000). Returns `{run_id, seq, kind, summary}` per hit plus scan counters. |

## Sample interactions [#sample-interactions]

> **You:** What runs are in the database?

>

> **Claude:** *(calls `list_runs`)*

> Five runs, newest first. Three completed, one failed

> (`max_turns` at 14:32:07), one cancelled. Total cost $0.0089.

> **You:** Summarize the failed one.

>

> **Claude:** *(calls `summarize_run` with the failed run's id)*

> Run `01HZ...` failed after 5 turns and 2 tool calls,

> $0.0021, 1247 input / 38 output tokens, ran for 8.4 seconds.

> The final assistant text was empty - terminal kind is RunFailed

> with error\_type `max_turns`.

> **You:** Was there a tool that errored?

>

> **Claude:** *(calls `search_runs` with `kind=ToolCallFailed`)*

> Two `ToolCallFailed` events. Both in run `01HZ...`, both for the

> `fetch` tool with `error_type=tool` and message

> `upstream 503: service unavailable`. Same call id `c4` retried

> twice before final failure.

> **You:** Show me event 5 of that run.

>

> **Claude:** *(calls `get_event` with `seq=5`)*

> AssistantMessageCompleted at 14:32:05.882, payload says it

> planned to call `fetch` with `{url: "https://..."}`, stop\_reason

> `tool_use`, 421 input + 18 output tokens, hash

> `9038a81bea67…`.

The model picks which tool to call. You ask a normal question.

## Read-only by construction [#read-only-by-construction]

Three layers of defence:

1. **Storage layer.** `eventlog.NewSQLite(path,

eventlog.WithReadOnly())` - `Append` returns `ErrReadOnly`.

2. **Tool surface.** The server registers no write tools. There is

no `add_event`, no `prune`, no `migrate`. Even a malicious

client can't ask for what isn't there.

3. **Construction.** `mcpsrv.New` accepts an `eventlog.EventLog`,

not a path; the binary opens the read-only handle before

handing it off.
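The storage-layer and construction layers combine into a familiar pattern: the consumer only ever receives an interface value whose `Append` refuses. A minimal sketch, with a hypothetical two-method `eventLog` interface and in-memory store standing in for the real types:

```go
package main

import (
	"errors"
	"fmt"
)

// eventLog is a hypothetical, cut-down log interface for this sketch.
type eventLog interface {
	Append(event string) error
	Read() []string
}

var errReadOnly = errors.New("event log is read-only")

// memLog is a writable in-memory store.
type memLog struct{ events []string }

func (m *memLog) Append(event string) error {
	m.events = append(m.events, event)
	return nil
}

// Read returns a copy so callers cannot mutate the store.
func (m *memLog) Read() []string { return append([]string(nil), m.events...) }

// readOnlyLog wraps any eventLog and refuses every write.
type readOnlyLog struct{ inner eventLog }

func (r readOnlyLog) Append(string) error { return errReadOnly }
func (r readOnlyLog) Read() []string      { return r.inner.Read() }

// newServer accepts only the interface, so the caller decides which
// handle (writable or read-only) the server ever sees.
func newServer(log eventLog) func() []string { return log.Read }

func main() {
	store := &memLog{}
	_ = store.Append("RunStarted")

	ro := readOnlyLog{inner: store}
	fmt.Println(ro.Append("forged"), newServer(ro)())
}
```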

## Limits and gotchas [#limits-and-gotchas]

* **`search_runs` is naive.** It walks every event of every run

matching the run page; cost is `O(runs × events)`. It requires

either `query` or `kind`, pages runs with `run_limit` /

`run_offset`, caps hits with `limit`, and stops once

`max_examined_events` is reached. Responses include

`runs_examined`, `total_matching_runs`, `runs_capped`, and

`scan_capped`. Real indexes are a future pass.

* **`get_run` event cap.** A single run can have thousands of

events with long assistant text. Default cap is 200 events per

call; the response includes `truncated: true` and a

`total_events` count so the model knows to page. Built-in log

backends page events in storage; custom logs fall back to `Read`

plus in-memory slicing.

* **No streaming.** MCP tools are request/response. To watch a run

unfold turn-by-turn, use the [inspector](/docs/inspector) - its

SSE timeline is the right surface for that.

* **One DB per server.** Configure multiple `mcpServers` entries in

your client to query multiple databases.
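The `get_run` paging contract above (`truncated` plus `total_events`) can be sketched with in-memory slicing, the same fallback the text mentions for custom logs. The `page` struct, `pageEvents`, and `fetchAll` are hypothetical names, and treating a non-positive limit as "no cap" is an assumption of the sketch.

```go
package main

import "fmt"

// page mirrors the response fields named above.
type page struct {
	Events      []string
	Truncated   bool
	TotalEvents int
}

// pageEvents slices one page out of an in-memory event list.
// A limit of zero or less means "no cap" in this sketch.
func pageEvents(all []string, offset, limit int) page {
	if offset < 0 {
		offset = 0
	}
	if offset > len(all) {
		offset = len(all)
	}
	end := len(all)
	if limit > 0 && offset+limit < end {
		end = offset + limit
	}
	return page{Events: all[offset:end], Truncated: end < len(all), TotalEvents: len(all)}
}

// fetchAll shows the client side: keep paging while truncated is set.
func fetchAll(all []string, limit int) []string {
	var out []string
	offset := 0
	for {
		p := pageEvents(all, offset, limit)
		out = append(out, p.Events...)
		if !p.Truncated {
			return out
		}
		offset += len(p.Events)
	}
}

func main() {
	events := []string{"e1", "e2", "e3", "e4", "e5"}
	p := pageEvents(events, 0, 2)
	fmt.Println(p.Events, p.Truncated, p.TotalEvents)
	fmt.Println(fetchAll(events, 2))
}
```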

## See also [#see-also]

- [MCP tools (outbound)](/docs/mcp-tools): The other half: agents calling external MCP servers as tools.

- [Inspector](/docs/inspector): Web UI for the same event log. SSE-streamed live timeline.

- [Events](/docs/events): The event kinds whose payloads `get_event` decodes.

# MCP tools (outbound client) (/docs/mcp-tools)

This page is the outbound MCP **client** - agents calling external

MCP servers. For the inbound **server** that lets AI assistants

query your recorded event log, see [MCP server](/docs/mcp-server).

`tool/mcp` adapts [Model Context Protocol](https://modelcontextprotocol.io)

servers onto `tool.Tool`. The core runtime stays MCP-agnostic.

## Three transports [#three-transports]

```go

import (

"os/exec"

mcptool "github.com/jerkeyray/starling/tool/mcp"

)

// 1. stdio subprocess server.

client, err := mcptool.NewCommand(ctx,

exec.Command("uvx", "mcp-server-filesystem", "/tmp"),

mcptool.WithToolNamePrefix("fs_"),

)

// 2. streamable HTTP server.

client, err = mcptool.NewHTTP(ctx, "https://mcp.example.com/sse", nil)

// 3. any custom mcp.Transport.

client, err = mcptool.New(ctx, transport)

```

Each constructor lists the server's tools at connect time and caches them.

Call `client.Tools(ctx)` to retrieve `[]tool.Tool` for use with `Agent`,

or `client.RefreshTools(ctx)` to re-list.

## Wire into an agent [#wire-into-an-agent]

```go

client, err := mcptool.NewCommand(ctx,

exec.Command("uvx", "mcp-server-filesystem", "/tmp"),

mcptool.WithToolNamePrefix("fs_"),

mcptool.WithCallTimeout(10*time.Second),

)

if err != nil {

panic(err)

}

defer client.Close()

mcpTools, err := client.Tools(ctx)

if err != nil {

panic(err)

}

a := &starling.Agent{

Provider: prov,

Tools: append(localTools, mcpTools...),

Log: log,

Config: starling.Config{Model: "gpt-4o-mini", MaxTurns: 8},

}

```

## Options [#options]

| Option | Purpose |

| ---------------------------------- | ---------------------------------------------------------------- |

| `WithClientInfo(name, version)` | Override the client identity sent on `initialize`. |

| `WithToolNamePrefix(p)` | Namespace remote tools: useful when mounting multiple servers. |

| `WithIncludeTools(...)` | Restrict to the named remote tools. |

| `WithExcludeTools(...)` | Drop the named remote tools. |

| `WithCallTimeout(d)` | Per-call deadline. Zero leaves cancellation to the caller's ctx. |

| `WithMaxOutputBytes(n)` | Cap the JSON-encoded result. Defaults to 1 MiB. |

| `WithTextOnly(true)` | Reject non-text content rather than forwarding it. |

| `WithTransientErrorClassifier(fn)` | Classify transport errors as `tool.ErrTransient` for retries. |

## Replay safety [#replay-safety]

Each MCP tool call goes through `step.SideEffect` keyed on

`mcp/`. The first live invocation contacts the server,

records the result as a `SideEffectRecorded` event, and returns. Replay

reads the recorded value out of the log and never re-contacts the server.

The same applies under `(*Agent).Resume`. An orphaned MCP call from a

crashed run is reissued under a fresh `CallID`; the new live invocation is

recorded as its own SideEffect.

This means your recorded runs are portable. You can replay them on a

machine that has no network access to the MCP server.

## Tool errors vs transport errors [#tool-errors-vs-transport-errors]

The MCP protocol distinguishes two failure modes; Starling preserves both.

**Server returned `IsError: true`.** The remote tool ran and decided the

call failed (bad input, business-rule rejection). `Execute` returns a

typed `*mcptool.ToolError` carrying the server's content. Use `errors.As`

to inspect.

**Transport or protocol error.** Connection refused, timeout, malformed

JSON-RPC, etc. `Execute` returns the underlying error wrapped with the

tool name. If `WithTransientErrorClassifier` reports the error retryable,

it's also wrapped with `tool.ErrTransient` so the caller's

`step.ToolCall{Idempotent: true, MaxAttempts: N}` retries kick in.
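The practical effect of the two failure modes is that only transient errors are retried. The sketch below shows that decision with local stand-ins: `errTransient`, `toolError`, `wrapTransient`, and `callWithRetry` are hypothetical and only imitate the roles of `tool.ErrTransient`, `*mcptool.ToolError`, and the runtime's retry loop.

```go
package main

import (
	"errors"
	"fmt"
)

// errTransient is a local stand-in for the retryable sentinel.
var errTransient = errors.New("transient")

// toolError is a local stand-in for a server-reported tool failure.
type toolError struct{ Content string }

func (e *toolError) Error() string { return "tool error: " + e.Content }

// wrapTransient marks a transport failure as retryable, keeping the
// tool name in the message.
func wrapTransient(toolName string) error {
	return fmt.Errorf("%s: connection refused: %w", toolName, errTransient)
}

// callWithRetry retries only while the error is transient and attempts
// remain. It returns the number of attempts made and the final error.
func callWithRetry(maxAttempts int, call func(attempt int) error) (int, error) {
	if maxAttempts < 1 {
		maxAttempts = 1
	}
	var err error
	for attempt := 1; attempt <= maxAttempts; attempt++ {
		err = call(attempt)
		if err == nil || !errors.Is(err, errTransient) {
			return attempt, err
		}
	}
	return maxAttempts, err
}

func main() {
	n, err := callWithRetry(3, func(attempt int) error {
		if attempt < 3 {
			return wrapTransient("fetch")
		}
		return nil
	})
	fmt.Println("attempts:", n, "err:", err)

	n, err = callWithRetry(3, func(int) error { return &toolError{Content: "bad input"} })
	fmt.Println("attempts:", n, "err:", err)
}
```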

## What's intentionally not adapted [#whats-intentionally-not-adapted]

`tool/mcp` ships with a deliberately narrow surface:

* **MCP resources**: file-tree-style reads; not yet wired.

* **MCP prompts**: server-provided prompt templates; deferred.

* **MCP sampling**: server asking the client to run a model; deferred.

Tools cover the common ADK-parity case. If you hit a wall on resources or

prompts, file an issue with the use case before building it locally.

# Operations (/docs/operations)

## Process model [#process-model]

Starling is a Go library, not a server. Two common shapes:

1. **Embedded.** The agent runs inside your existing Go service. The

event log is a file (SQLite) or connection (Postgres) the service

already manages. The inspector ships as a separate binary or a

subcommand of your binary, pointed at the same log read-only.

2. **Sidecar inspector.** Agent in service A, inspector in service B

with a read-only handle to the same log. Use this when operators

need debugging access without redeploying the primary service.

There is no required scheduler. `Agent.Run` is a blocking Go call.

## Picking a backend [#picking-a-backend]

| Backend | Use when | Avoid when |

| ---------------------- | ------------------------------------------------------------------------------- | --------------------------------------------------------- |

| `eventlog.NewInMemory` | Tests, ephemeral CLIs. | Anything you might want to replay later. |

| `eventlog.NewSQLite` | Single-host services, edge nodes. WAL + per-run `_txlock=immediate`. | Multi-host writers: SQLite has no cross-host locking. |

| `eventlog.NewPostgres` | Multi-host services, regulated workloads, anything needing PITR or replication. | Workloads where the DB is unavailable for long stretches. |

## Schema migrations [#schema-migrations]

Every `eventlog.NewSQLite` call auto-migrates on open. Postgres callers

run migrations explicitly:

```bash

# CLI (SQLite)

starling migrate /var/lib/starling/log.db

starling schema-version /var/lib/starling/log.db

```

```go

// In-process (any backend)

log, err := eventlog.NewPostgres(db)

if err != nil { return err }

if _, err := eventlog.Migrate(ctx, log); err != nil { return err }

```

`Agent.Run`, `Agent.Resume`, and the inspector run `eventlog.Preflight`

on startup and refuse to operate against a stale or too-new schema.

Disable with `Config.SkipSchemaCheck = true` only in tests.

## SQLite [#sqlite]

```go

log, err := eventlog.NewSQLite("/var/lib/starling/log.db")

```

* WAL mode is on (`PRAGMA journal_mode=WAL`) and the fsync policy is
  `synchronous=NORMAL`. Set `synchronous=FULL` if you need stronger
  durability guarantees and can pay the latency.

* File permissions: `0600`, owned by the agent user.

* One process per file. Multiple processes can read concurrently

(`WithReadOnly`), but only one process should ever write: use

Postgres for multi-writer.

* Backup: `sqlite3 log.db ".backup /tmp/log-backup.db"` while the agent

is running. Restore by stopping the agent, swapping the file, and

restarting.

## Postgres [#postgres]

```go

db, err := sql.Open("postgres", os.Getenv("DATABASE_URL"))
if err != nil { return err } // surface a bad driver name or DSN early
db.SetMaxOpenConns(8)
log, err := eventlog.NewPostgres(db, eventlog.WithAutoMigratePG())

```

* Postgres ≥ 11 (uses `hashtextextended` for advisory locks).

* Connection pool: size to expected concurrent runs plus headroom for

the inspector.

* Per-run advisory locks (`pg_advisory_xact_lock`) serialize appends

for a given `run_id`. Different runs are independent.

* Backup: standard `pg_dump --table=eventlog_events` for logical

exports; PITR via WAL archiving for hot recovery.

* Restore: `pg_restore` into an empty schema, then run `eventlog.Migrate`.

## Security [#security]

### Threat model [#threat-model]

| Actor | What they can do | What we defend |

| -------- | ------------------------------------------------ | ------------------------------------------------------------------------ |

| Operator | Runs the process, owns the DB and provider keys. | Trusted; the runtime assumes operator code is benign. |

| End user | Supplies goals and conversation messages. | Tool inputs, event payloads, replay determinism. |

| Provider | LLM API the agent talks to. | Stream chunk validation, raw-response hash via `RequireRawResponseHash`. |

### What the hash chain does and does not prove [#what-the-hash-chain-does-and-does-not-prove]

**Proves:** events were appended in a specific order; no event was

modified after append; replays match recorded byte-exact behavior.

**Does not prove:** that the operator wrote the truth into the log

(an operator can construct any valid run); that the provider returned

a specific response (`RawResponseHash` is a BLAKE3 digest computed

by the adapter over the SDK-level response, not a vendor signature);

that the agent ran at the claimed wall-clock time (`Timestamp` comes

from `step.Now`).

For cross-process attestation, sign the terminal `RunCompleted`

payload externally: the Merkle root is the natural signing target.

### Inspector auth and TLS [#inspector-auth-and-tls]

For the user-facing tour (UI, keyboard shortcuts, diff page,

theme), see [Inspector](/docs/inspector). This section covers the

deployment posture only.

Bearer auth via `inspect.WithAuth(inspect.BearerAuth(token))` or the

`STARLING_INSPECT_TOKEN` env var. CSRF protection is always on for

state-changing routes (replay POSTs); the inspector plants an

`X-CSRF-Token` cookie on safe responses.

The inspector's HTTP server is plain HTTP. **Always front it with a

TLS-terminating reverse proxy** for non-loopback access:

```nginx

server {

listen 443 ssl;

ssl_certificate /etc/ssl/starling.pem;

ssl_certificate_key /etc/ssl/starling.key;

location / {

proxy_pass http://127.0.0.1:8080;

proxy_http_version 1.1;

proxy_buffering off; # required for SSE

proxy_read_timeout 1h;

}

}

```

For mTLS, use `ssl_verify_client on` at the proxy. The inspector itself

does not consume client certs: the proxy decision is authoritative.

### Secrets [#secrets]

| Secret | Where | Notes |

| ------------------------ | ----------------------- | -------------------------------------------------------------------------------------------------------------------- |

| Provider API keys | `Agent.Provider` config | Pass via env, not source. Never log. |

| `STARLING_INSPECT_TOKEN` | Env var | Rotate on operator changes. |

| Postgres DSN | Env var | Use a role with minimum privileges (writer: `SELECT, INSERT`; retention job: `SELECT, DELETE`; inspector: `SELECT`). |

### Sensitive event payloads [#sensitive-event-payloads]

Payloads carry full conversations, reasoning, and tool I/O. Treat the

DB as PII: encrypt at rest, lock down OS access, never expose the

inspector outside a trusted audience.

No built-in field-level redaction. Redact at the tool boundary.
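
A minimal sketch of redacting at the tool boundary, assuming the tool returns JSON bytes and that email addresses are the sensitive field. `redactEmails` and its pattern are hypothetical; call something like it inside the tool's `Execute` (or from a middleware) before the output is returned and recorded.

```go
package main

import (
	"fmt"
	"regexp"
)

// emailRe is a deliberately loose pattern for illustration only;
// real redaction rules depend on your data.
var emailRe = regexp.MustCompile(`[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}`)

// redactEmails replaces anything that looks like an email address
// so the raw value never reaches the event log.
func redactEmails(out []byte) []byte {
	return emailRe.ReplaceAll(out, []byte("[redacted-email]"))
}

func main() {
	raw := []byte(`{"owner":"alice@example.com","status":"ok"}`)
	fmt.Println(string(redactEmails(raw)))
}
```

Because the redacted bytes are what gets recorded, replay compares against the redacted form, so the redaction itself has to be deterministic.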

## Retention [#retention]

The log is append-only. Mutating any event breaks the hash chain for

every later event in the same run.

**Cannot do:** mutate events, delete a single event from a run, reuse

a `run_id`. The unit of deletion is the whole run.

**Can do:**

* Delete whole runs (`DELETE ... WHERE run_id IN (...)`).

* Archive runs to NDJSON via `starling export`, then delete.

* Use `starling prune` for dry-run-first rolling retention on SQLite

logs.

* Partition by time (on Postgres, retire old partitions with
  `ALTER TABLE ... DETACH PARTITION` followed by `DROP TABLE`; use
  `pg_partman` or a cron job to roll monthly partitions).

* Filter the inspector view by `RunSummary.StartedAt` instead of

deleting.

### Rolling-window deletion [#rolling-window-deletion]

```bash

# Dry run: report what would be deleted (2160h = 90 days)
starling prune --older-than 2160h /var/lib/starling/log.db

# Delete for real
starling prune --older-than 2160h --confirm /var/lib/starling/log.db

```

By default, `prune` deletes only whole terminal runs. Use `--status` to

target one status and `--limit` to break large cleanup jobs into

smaller batches. Run `VACUUM` during low traffic if you need SQLite to

return freed pages to the filesystem.

For Postgres, call `eventlog.RunPruner` in your maintenance job with a

role that has `SELECT` and `DELETE` on `eventlog_events`, or use time

partitions when you need instant archival drops. Keep inspector roles

read-only.

### PII deletion (right-to-erasure) [#pii-deletion-right-to-erasure]

Maintain an external `user_id → []run_id` index: Starling does not

keep one. On request, prune those whole runs and remove any archived

NDJSON. Don't try to selectively redact within a run; the chain depends

on every event.

## Metrics [#metrics]

`Agent.Metrics = starling.NewMetrics(reg)` registers a Prometheus

collection set on the supplied `prometheus.Registerer`. Highlights:

| Metric | Type | Labels |

| ------------------------------------------- | --------- | ------------------------------ |

| `starling_runs_started_total` | Counter | - |

| `starling_runs_in_flight` | Gauge | - |

| `starling_run_duration_seconds` | Histogram | `status` |

| `starling_run_terminal_total` | Counter | `status`, `error_type` |

| `starling_provider_calls_total` | Counter | `model`, `status` |

| `starling_provider_call_duration_seconds` | Histogram | `model` |

| `starling_provider_tokens_total` | Counter | `model`, `type` |

| `starling_tool_calls_total` | Counter | `tool`, `status`, `error_type` |

| `starling_tool_call_duration_seconds` | Histogram | `tool` |

| `starling_eventlog_appends_total` | Counter | `kind`, `status` |

| `starling_eventlog_append_duration_seconds` | Histogram | `kind` |

| `starling_budget_exceeded_total` | Counter | `axis` |

Wire the `/metrics` handler into your existing HTTP mux:

```go

metrics := starling.NewMetrics(prometheus.DefaultRegisterer)

http.Handle("/metrics", starling.MetricsHandler(prometheus.DefaultGatherer))

```

For direct `Append` callers outside `step.emit`, wrap the log with

`eventlog.WithMetrics(log, observer)` so latency histograms cover

that path too.

## Tracing [#tracing]

OpenTelemetry spans are emitted under the `starling` instrumentation

name. Wire any OTLP exporter:

```go

exp, err := otlptracegrpc.New(ctx)

if err != nil { return err }

provider := sdktrace.NewTracerProvider(sdktrace.WithBatcher(exp))

otel.SetTracerProvider(provider)

```

Expected span tree per run:

```text

agent.run

└── agent.turn × N

├── provider.stream

└── step.tool × M

```

## Failure recovery [#failure-recovery]

A crashed run leaves an open hash chain. Restart the same `runID` with

`Agent.Resume(ctx, runID, "")`:

* If the crash happened mid-turn before `AssistantMessageCompleted`,

Resume reissues pending tool calls under fresh `CallID`s.

* If the assistant turn completed but tools were pending, same path.

* Pass `WithReissueTools(false)` to refuse and inspect manually.

Resume goes through the same `eventlog.Preflight` check as `Run`, so a

stale schema fails fast.

## Operational checklist [#operational-checklist]

* [ ] Backups verified by restoring into a staging DB monthly.

* [ ] Inspector behind TLS + bearer token.

* [ ] Provider API keys in env, not source.

* [ ] DB file/connection user has minimum privileges.

* [ ] `eventlog.Migrate` in your release script (Postgres) or trusted

to run on `NewSQLite`.

* [ ] Metrics scraped; dashboards alert on

`starling_eventlog_append_duration_seconds` p99 and

`starling_provider_call_duration_seconds` p99.

* [ ] Retention policy implemented (rolling window, archive-to-NDJSON,

or partitioning).

* [ ] Security review for tool-side network/filesystem access.

# Quickstart (/docs/quickstart)

Build a single-tool agent, persist it to SQLite, verify replay.

## Install [#install]

```bash title="terminal"

go get github.com/jerkeyray/starling

```

Go 1.26+. SQLite is pure-Go via `modernc.org/sqlite`; no CGo.

## Hello agent [#hello-agent]

```go title="main.go"

package main

import (

"context"

"fmt"

"os"

"time"

starling "github.com/jerkeyray/starling"

"github.com/jerkeyray/starling/eventlog"

"github.com/jerkeyray/starling/provider/openai"

"github.com/jerkeyray/starling/step"

"github.com/jerkeyray/starling/tool"

)

type clockOut struct {

UTC string `json:"utc"`

}

func main() {

prov, err := openai.New(openai.WithAPIKey(os.Getenv("OPENAI_API_KEY")))

if err != nil {

panic(err)

}

// step.Now records the timestamp on the live run and returns the

// recorded value on replay, so the tool stays deterministic.

clock := tool.Typed(

"current_time",

"Return the current UTC time in RFC3339.",

func(ctx context.Context, _ struct{}) (clockOut, error) {

return clockOut{UTC: step.Now(ctx).UTC().Format(time.RFC3339)}, nil

},

)

a := &starling.Agent{

Provider: prov,

Tools: []tool.Tool{clock},

Log: eventlog.NewInMemory(),

Config: starling.Config{Model: "gpt-4o-mini", MaxTurns: 4},

}

res, err := a.Run(context.Background(), "What is the current UTC time?")

if err != nil {

panic(err)

}

fmt.Println(res.FinalText)

}

```

`tool.Typed` derives the JSON schema from the input type. The agent

records `ToolCallScheduled` + `ToolCallCompleted` around every dispatch.

## Persist the run [#persist-the-run]

Swap the in-memory log for SQLite to keep events on disk:

```go title="main.go"

log, err := eventlog.NewSQLite("starling.db")

if err != nil {

panic(err)

}

defer log.Close()

a := &starling.Agent{

Provider: prov,

Tools: []tool.Tool{clock},

Log: log,

Config: starling.Config{Model: "gpt-4o-mini", MaxTurns: 4},

}

```

`NewSQLite` opens in WAL mode and auto-migrates. One writer, many readers.

## Replay the run [#replay-the-run]

Once a run is persisted you can re-execute it against the same agent and

verify every emitted event matches the recording byte-for-byte:

```go title="main.go"

import "errors"

if err := starling.Replay(ctx, log, runID, a); err != nil {

if errors.Is(err, starling.ErrNonDeterminism) {

// A tool output, prompt, or model changed since the original run.

// err carries a *replay.Divergence with seq + class fields.

}

panic(err)

}

```

Replay serves the recorded LLM output from the log and never re-invokes
the provider; tools re-execute and their output is compared to the
recording. If your code path hits `step.Now`, `step.Random`, or
`step.SideEffect`, the recorded values are returned instead. Anything else
is non-deterministic and should be moved behind a `step` helper.

## Inspect a run [#inspect-a-run]

```bash title="terminal"

go run github.com/jerkeyray/starling/cmd/starling-inspect starling.db

```

Embedded read-only web UI: runs list, timeline, payload detail, replay

controls, divergence rendering. Dark by default, click-to-pick diff

page, syntax-highlighted JSON. Full tour in

[Inspector](/docs/inspector).

## Where to next [#where-to-next]

- [Concepts](/docs/concepts): The determinism contract, side effects, and the event log as source of truth.

- [MCP tools (outbound)](/docs/mcp-tools): Add remote MCP server tools as ordinary Starling tools.

- [MCP server (inbound)](/docs/mcp-server): Query your event log from Claude Desktop, Cursor, or Claude Code.

# Reference (/docs/reference)

Per-package types, signatures, and short examples.

## starling (root) [#starling-root]

The agent loop, run lifecycle, and replay surface.

### Agent [#agent]

```go

type Agent struct {

Provider provider.Provider

Tools []tool.Tool

Log eventlog.EventLog

Config Config

Budget *Budget

Metrics *Metrics

Namespace string // optional run-id prefix

}

```

`Agent` holds no per-run state. Two instances pointing at the same log

are interchangeable.

### Config [#config]

| Field | Default | Notes |

| ------------------------ | ---------------- | ------------------------------------------------------------------------- |

| `Model` | required | Provider-specific model id, e.g. `"gpt-4o-mini"`. |

| `MaxTurns` | `0` = ∞ | Caps the ReAct loop. `0` is allowed but not recommended. |

| `SystemPrompt` | `""` | Prepended to every conversation. Captured into `RunStarted`. |

| `Params` | `nil` | Provider-specific param blob (CBOR). Hashed into `RunStarted.ParamsHash`. |

| `RequireRawResponseHash` | `false` | Fail any turn whose `ChunkEnd` lacks a 32-byte raw-response digest. |

| `AppVersion` | `""` | Stamped into `RunStarted` alongside the Starling library version. |

| `EmitTimeout` | `0` = ∞ | Bounds each event-log Append under `context.WithoutCancel`. |

| `SkipSchemaCheck` | `false` | Disables `eventlog.Preflight` on `Run` / `Resume`. Tests only. |

| `Logger` | `slog.Default()` | Structured slog records for run lifecycle. |

### Run / Resume / Replay [#run--resume--replay]

* **`Run(ctx, goal) (*RunResult, error)`** — live entry. Mints a fresh

run id (namespaced when `Namespace != ""`), emits `RunStarted`, runs

the loop, returns the terminal `*RunResult`.

* **`Resume(ctx, runID, extraMessage) (*RunResult, error)`** — re-enters

a run from its last seq. Pending tool calls reissue under fresh

`CallID`s; the orphan stays for audit. `ResumeWith(...opts)` adds

`WithReissueTools(false)` for manual recovery.

* **`Replay(ctx, log, runID, agent, opts...) error`** — re-executes

against the same wiring. Returns `nil` on a clean replay, wraps a

`*replay.Divergence` with `ErrNonDeterminism` on the first

mismatch, or `ErrProviderModelMismatch` when the agent's

`Provider.ID` / `APIVersion` / `Config.Model` disagree with the

recording. `WithForceProvider()` disables the identity check.

* **`RunStream(ctx, goal) (string, <-chan AgentEvent, error)`** —

typed event stream layered over `Stream`. Variants: `TextDelta`,

`ToolCallStarted`, `ToolCallEnded`, `Done`. Channel closes after

a single `Done`.

`RunResult` carries `RunID`, `FinalText`, totals (`TurnCount`,

`ToolCallCount`, `TotalCostUSD`, `InputTokens`, `OutputTokens`,

`Duration`), `TerminalKind`, `MerkleRoot`, and `CacheStats`

(`Hits`, `Misses`, `ReadTokens`, `CreateTokens`). All recoverable

from the log; the struct is a convenience.

### Sentinel errors [#sentinel-errors]

| Error | Meaning |

| -------------------------- | ----------------------------------------------------------------------------------- |

| `ErrNonDeterminism` | Replay diverged from the recording. Wraps `*replay.Divergence`. |

| `ErrPartialToolCall` | `Resume` saw pending tool calls and `WithReissueTools(false)` was set. |

| `ErrRunNotFound` | Resume target run id is absent from the log. |

| `ErrRunAlreadyTerminal` | Resume target ended with a terminal event. |

| `ErrRunInUse` | Another writer already advanced the chain. |

| `ErrSchemaVersionMismatch` | The recording's schema version is unsupported by this binary. |

| `ErrProviderModelMismatch` | Replay agent's Provider.ID / APIVersion / Config.Model disagrees with `RunStarted`. |

## budget [#budget]

`Budget` has four axes; a zero value on a field disables that axis. A trip emits

`BudgetExceeded{Limit, Cap, Actual, Where}` and unwinds with

`RunFailed{ErrorType:"budget"}`.

| Axis (field) | Type | When |

| ----------------- | --------------- | ---------------------------------------------------- |

| `MaxInputTokens` | `int64` | Pre-call, before every `step.LLMCall`. |

| `MaxOutputTokens` | `int64` | Mid-stream on every `ChunkUsage`. |

| `MaxUSD` | `float64` | Mid-stream using `budget/prices.go` per-model rates. |

| `MaxWallClock` | `time.Duration` | `context.WithDeadline` wrapping the run. |

`budget.RegisterPricing(model, inPerMtok, outPerMtok)` registers

or overrides per-model USD pricing at runtime. It also resets the

unknown-model warn-once memo so a stale warning doesn't outlive

the call. Built-in rates ship for major-vendor models in

`budget/prices.go`.
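
To make the USD axis concrete, here is a small sketch of the arithmetic, assuming rates are expressed in USD per million tokens (as the `inPerMtok` / `outPerMtok` parameter names suggest). The `pricing` type, `costUSD`, `exceeds`, and the rates are all made up for illustration; whether the real check trips on `>` or `>=` is not specified here.

```go
package main

import "fmt"

// pricing holds hypothetical USD rates per million tokens.
type pricing struct {
	inPerMtok, outPerMtok float64
}

// costUSD converts token counts into dollars.
func costUSD(p pricing, inputTokens, outputTokens int64) float64 {
	return float64(inputTokens)/1e6*p.inPerMtok +
		float64(outputTokens)/1e6*p.outPerMtok
}

// exceeds reports whether actual has crossed the cap.
// A zero cap disables the check, mirroring the zero-disables rule.
func exceeds(maxUSD, actual float64) bool {
	return maxUSD > 0 && actual > maxUSD
}

func main() {
	p := pricing{inPerMtok: 2, outPerMtok: 8} // made-up rates
	c := costUSD(p, 500_000, 250_000)         // 0.5*2 + 0.25*8
	fmt.Printf("%.2f %v\n", c, exceeds(2.5, c))
}
```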

## event [#event]

The wire format. Every event carries:

```go

type Event struct {

RunID string

Seq uint64

PrevHash []byte // BLAKE3 of canonical CBOR of prev event

Timestamp int64 // Unix nanoseconds

Kind Kind

Payload cborenc.RawMessage // kind-specific struct, CBOR-encoded

}

```

The full schema with payload definitions, the kinds the runtime emits,

the reserved kinds, and the invariants live on the [Event schema](/docs/events) page.

Encoding helpers: `Marshal`, `Unmarshal`, `Hash`, `ToJSON`.

`event.HashSize` is 32. Each typed payload has an `EncodePayload[T]`

helper; each kind has a matching accessor (`AsRunStarted`,

`AsToolCallCompleted`, …).
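
The `PrevHash` linkage can be sketched with the standard library alone. Note the substitutions: this toy uses SHA-256 over JSON, whereas Starling uses BLAKE3 over canonical CBOR, and the `event` type here is a trimmed stand-in rather than the real struct. All function names are hypothetical.

```go
package main

import (
	"bytes"
	"crypto/sha256"
	"encoding/json"
	"fmt"
)

// event is a trimmed stand-in for the real Event struct.
type event struct {
	Seq      uint64
	PrevHash []byte
	Kind     string
}

// hashEvent digests the encoded event.
// JSON + SHA-256 stand in for canonical CBOR + BLAKE3.
func hashEvent(e event) []byte {
	b, err := json.Marshal(e)
	if err != nil {
		panic(err) // cannot happen for this struct
	}
	sum := sha256.Sum256(b)
	return sum[:]
}

// appendEvent links a new event to the current tail of the chain.
func appendEvent(chain []event, kind string) []event {
	e := event{Seq: uint64(len(chain) + 1), Kind: kind}
	if len(chain) > 0 {
		e.PrevHash = hashEvent(chain[len(chain)-1])
	}
	return append(chain, e)
}

// verifyChain returns the seq of the first event whose PrevHash does
// not match its predecessor, or 0 if the chain is intact.
func verifyChain(chain []event) uint64 {
	for i := 1; i < len(chain); i++ {
		if !bytes.Equal(chain[i].PrevHash, hashEvent(chain[i-1])) {
			return chain[i].Seq
		}
	}
	return 0
}

func main() {
	var chain []event
	for _, k := range []string{"RunStarted", "TurnStarted", "RunCompleted"} {
		chain = appendEvent(chain, k)
	}
	fmt.Println(verifyChain(chain)) // 0: intact

	chain[0].Kind = "Tampered"
	fmt.Println(verifyChain(chain)) // 2: first broken link
}
```

Editing an event invalidates the `PrevHash` of its successor, which is why the break is reported one seq after the tampered event.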

## eventlog [#eventlog]

```go

type EventLog interface {

Append(ctx, runID, ev) error

Read(ctx, runID) ([]Event, error)

Stream(ctx, runID) (<-chan Event, error)

Close() error

}

```

`RunLister` adds `ListRuns(ctx) ([]RunSummary, error)`. `RunPageLister`

adds `ListRunsPage(ctx, opts) (RunPage, error)` for filtered,

server-side pagination. `RunPruner` adds explicit whole-run retention

cleanup with `PruneRuns(ctx, opts) (PruneReport, error)`. All three

built-in backends implement these optional interfaces.

`RunSummary` carries per-run aggregates (`TurnCount`,

`ToolCallCount`, `InputTokens`, `OutputTokens`, `CostUSD`,

`DurationMs`) so dashboards don't have to re-aggregate event streams.

Helpers: `eventlog.AggregateRun(events)` returns the same totals

over a chained event slice (single source of truth for the

inspector and the MCP server). `eventlog.ForkSQLite(ctx, src,

dst, runID, beforeSeq)` is a WAL-safe SQLite branch via

`VACUUM INTO`, truncating one run's events at a sequence

boundary. The BLAKE3 chain helpers used by `Agent.Run` are public

at [`github.com/jerkeyray/starling/merkle`](https://pkg.go.dev/github.com/jerkeyray/starling/merkle).

### Backends [#backends]

| Constructor | Use when |

| -------------------------- | ---------------------------------------------------------------- |

| `NewInMemory()` | Tests, demos, ephemeral CLI tools. |

| `NewSQLite(path, opts...)` | Single-host services, edge nodes. |

| `NewPostgres(db, opts...)` | Multi-host services. Per-run advisory locks serialize appenders. |

Options: `WithReadOnly()` / `WithReadOnlyPG()` for inspector mode,

`WithAutoMigratePG()` to run migrations on connect.

### Validation, migrations, preflight [#validation-migrations-preflight]

* **`Validate(events)`** — seq monotonicity, hash chain, terminal

placement, Merkle root, and the semantic pairing rules from the

[Event schema](/docs/events).

* **`SchemaVersion(ctx, log)` / `Migrate(ctx, log, opts...)`** —

forward-only migration API. `Migrate` returns a `MigrationReport`.

* **`Preflight(ctx, log)`** — fails fast with `ErrSchemaOutdated` or

`ErrSchemaTooNew`. `Agent.Run`, `Agent.Resume`, and the inspector

all call it unless `Config.SkipSchemaCheck = true`.

* **`WithMetrics(log, obs)`** — wraps any `EventLog` so direct

`Append` callers see the same latency histograms `step.emit` records.

Sentinel errors: `ErrLogClosed`, `ErrLogCorrupt`, `ErrInvalidAppend`,

`ErrReadOnly`, `ErrSchemaOutdated`, `ErrSchemaTooNew`.

## step [#step]

The determinism layer. Anything non-deterministic in the agent loop must

go through `step` so replay can reproduce it byte-for-byte.

### Helpers [#helpers]

```go

func Now(ctx context.Context) time.Time

func Random(ctx context.Context) int64

func SideEffect[T any](ctx context.Context, name string, fn func() (T, error)) (T, error)

```

Live mode runs `fn` and emits a `SideEffectRecorded` event. Replay reads

the recorded value back without invoking `fn`. `T` must be CBOR-serializable.
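
The live/replay split can be illustrated with a toy recorder. This is a sketch of the idea only: `recorder` and `sideEffect` are hypothetical, values are keyed by name alone, and JSON stands in for CBOR. It is not how the `step` package is implemented.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// recorder is a toy stand-in for the event log: live mode stores
// values by name, replay mode serves them back.
type recorder struct {
	replaying bool
	values    map[string][]byte
}

// sideEffect runs fn and records its result when live; on replay it
// decodes the recorded value and never calls fn.
func sideEffect[T any](r *recorder, name string, fn func() (T, error)) (T, error) {
	var zero T
	if r.replaying {
		raw, ok := r.values[name]
		if !ok {
			return zero, fmt.Errorf("no recorded value for %q", name)
		}
		var v T
		if err := json.Unmarshal(raw, &v); err != nil {
			return zero, err
		}
		return v, nil
	}
	v, err := fn()
	if err != nil {
		return zero, err
	}
	raw, err := json.Marshal(v)
	if err != nil {
		return zero, err
	}
	r.values[name] = raw
	return v, nil
}

func main() {
	calls := 0
	fetch := func() (string, error) { calls++; return "v1.4.2", nil }

	r := &recorder{values: map[string][]byte{}}
	live, _ := sideEffect(r, "latest-release", fetch)

	r.replaying = true
	replayed, _ := sideEffect(r, "latest-release", fetch)

	fmt.Println(live == replayed, calls) // true 1: the closure ran once
}
```

The serializability requirement on `T` shows up here too: whatever `fn` returns has to survive an encode/decode round trip unchanged.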

### LLM calls [#llm-calls]

`LLMCall(ctx, req)` drives a streaming completion through the configured

provider. Emits `TurnStarted`, optional `ReasoningEmitted`, and

`AssistantMessageCompleted`. Enforces input/output/USD budgets inline.

Validates the chunk state machine (no EOF before `ChunkEnd`, no

duplicate `ChunkToolUseStart`, no chunks after `ChunkEnd`).

### Tool dispatch [#tool-dispatch]

```go

type ToolCall struct {

CallID, TurnID, Name string

Args json.RawMessage

Idempotent bool

MaxAttempts int

Backoff func(attempt int) time.Duration

}

```

* **`CallTool(ctx, c)`** — sequential dispatch.

* **`CallTools(ctx, calls)`** — fan-out with a semaphore (cap is

`step.DefaultMaxParallelTools`, 8).

* Retries kick in on `tool.ErrTransient` when `Idempotent` and

`MaxAttempts > 1`. `NewCallID()` mints fresh IDs.

### Replay errors [#replay-errors]

`MismatchError` carries `Seq`, `Kind`, `ExpectedKind`, `Class`

(`"exhausted" | "kind" | "payload" | "turn_id"`), and `Reason`. It

satisfies `errors.Is(err, ErrReplayMismatch)`. Use `errors.As` for the

structured fields. Other sentinels: `ErrInvalidStream`,

`ErrMissingRawResponseHash`. The replay package lifts these into

`replay.Divergence` (see the `replay` section below).

## tool [#tool]

```go

type Tool interface {

Name() string

Description() string

Schema() json.RawMessage // JSON Schema for input

Execute(ctx, in) (json.RawMessage, error)

}

```

`tool.Typed[In, Out](name, description, fn)` derives the JSON Schema

from `In` via reflection. Errors wrapping `tool.ErrTransient` opt the

call into retry under `step.ToolCall{Idempotent: true, MaxAttempts: N}`.

`tool.Wrap(t Tool, mw ...Middleware) Tool` composes middleware

around `Execute` while passing `Name`, `Description`, and `Schema`

through unchanged. Last middleware passed runs first

(net/http.Handler ordering); short-circuiting middleware can skip

the inner call entirely. Useful for logging, timing, span

injection, request authentication, output redaction.

### Test scaffolding (`starlingtest/`) [#test-scaffolding-starlingtest]

`ScriptedProvider` is a deterministic `provider.Provider` driven

by a slice of canned chunks per turn. Helpers `NewStream`,

`AppendRunStarted`, `AssertReplayMatches`, and

`AssertReplayDiverges` cover the common test shapes without

contacting an LLM.

### MCP adapter (`tool/mcp`) [#mcp-adapter-toolmcp]

Three constructors mount remote MCP tools as ordinary Starling tools:

* **`New(ctx, transport, opts...)`** — any `mcp.Transport`.

* **`NewCommand(ctx, exec.Cmd, opts...)`** — stdio subprocess.

* **`NewHTTP(ctx, endpoint, client, opts...)`** — streamable HTTP.

Each connects, lists remote tools, and exposes them via

`client.Tools(ctx)`. Calls route through `step.SideEffect` so replay

never re-contacts the server. Full options table on the

[MCP tools](/docs/mcp-tools) page. The inbound counterpart - a

read-only MCP **server** that exposes a recorded log to AI clients -

lives at [MCP server](/docs/mcp-server).

### HTTP daemon (`starlingd`) [#http-daemon-starlingd]

`starlingd.Command(factory)` builds a CLI entrypoint for serving your

own agent over HTTP. `starlingd.New(config)` returns an `http.Handler`

for apps that already own server setup. The daemon exposes async run

creation, bounded in-process queueing, SSE streams, read APIs,

Prometheus metrics, bearer auth, and an optional inspector mount. Full

reference lives at [HTTP daemon](/docs/starlingd).

### Built-in tools [#built-in-tools]

`tool/builtin/` ships `Fetch()` (public `http`/`https` only, 15s

timeout, 1 MiB cap, local/private-address and unsafe redirect

rejection) and `ReadFile(baseDir)` (path-escape rejection). Use

directly or as templates.

## provider [#provider]

The streaming-completion abstraction.

```go

type Provider interface {

Info() Info

Stream(ctx, req) (EventStream, error)

}

```

Optional `Capabler` exposes `Capabilities()` so the conformance suite

can skip what the adapter doesn't support. A `Request` carries `Model`,

`SystemPrompt`, `Messages`, `Tools`, `ToolChoice` (`""` | `"auto"` |

`"any"` | `"none"` | tool name), `StopSequences`, `TopK`,

`MaxOutputTokens`, and a vendor-specific `Params` blob.

`EventStream` yields `StreamChunk` values: `ChunkText`,

`ChunkReasoning`, `ChunkRedactedThinking`,

`ChunkToolUseStart/Delta/End`, `ChunkUsage`, `ChunkEnd`. The state

machine is enforced by `step.LLMCall`.

### Adapters [#adapters]

| Package | Use when |

| ---------------------- | ------------------------------------------------------------------------------------------------------------ |

| `provider/openai` | OpenAI, Groq, Together, Ollama, vLLM, LM Studio, Azure, anything else OpenAI-compatible (set `WithBaseURL`). |

| `provider/anthropic` | Messages API. Tool use, extended thinking with signature, prompt caching. |

| `provider/gemini` | Native Google Gemini. |

| `provider/bedrock` | Amazon Bedrock via native `ConverseStream` (AWS SDK v2). |

| `provider/openrouter` | OpenRouter: thin wrapper over the OpenAI adapter with attribution headers. |

| `provider/conformance` | The contract test every adapter passes. |

Each adapter advertises its support set via

`provider.Capabler.Capabilities()`. The conformance suite skips

capability-gated assertions when the adapter reports `false`.

### Error classification [#error-classification]

Adapters wrap underlying SDK / HTTP errors with one of four

sentinels for retry policy via `errors.Is`:

| Sentinel | When |

| ----------------------- | -------------------------------- |

| `provider.ErrRateLimit` | 429 / quota |

| `provider.ErrAuth` | 401 / 403 |

| `provider.ErrServer` | 5xx |

| `provider.ErrNetwork` | DNS / dial / TLS / broken stream |

Helpers: `provider.WrapHTTPStatus(err, status)` annotates by HTTP

status (delegates to `ClassifyTransport` when `status == 0`);

`provider.ClassifyTransport(err)` wraps `net.Error` and

`*url.Error` with `ErrNetwork`. 4xx errors that are neither auth

nor rate-limit pass through unwrapped on purpose - they reflect

caller bugs, not transient conditions.

## replay [#replay]

* **`Verify(ctx, log, runID, agent)`** — headless check. Returns `nil`

on a clean replay or wraps `*Divergence` with `ErrNonDeterminism` on

the first mismatch. `starling.Replay` is a thin wrapper that takes

`*Agent` directly.

* **`Stream(ctx, factory, log, runID)`** — inspector path. Yields a

`ReplayStep` per emitted event so the UI can render recorded vs

produced side-by-side. The final step has `Diverged: true` when the

replay didn't reach the recorded terminal.

`Divergence` carries `RunID`, `Seq`, `Kind`, `ExpectedKind`, `Class`,

`Reason`. `Factory` is `func(ctx) (Agent, error)`.

## inspect [#inspect]

Embedded HTTP handler. Serves the runs list, per-run timeline, event

detail, live tail (SSE), and replay. The standalone binary at

`cmd/starling-inspect` opens any SQLite log read-only.

`inspect.New(log, opts...) (*Server, error)`. Options:

* **`WithAuth(authenticator)`** — protect every endpoint.

* **`BearerAuth(token)`** — convenience `Authenticator`.

* **`WithReplayer(factory)`** — enable replay re-execution.

* **`WithDBPath(path)`** — show the DB basename in the topbar

context chip (full path on hover).

Read-only by construction, CSRF-protected on the replay POST endpoints.

Front it with TLS in production: see [Operations](/docs/operations).

## CLI (`cmd/starling`) [#cli-cmdstarling]

```bash

starling validate [] # hash chain + Merkle check

starling export # NDJSON event dump

starling prune [flags] # dry-run-first retention deletion

starling inspect [flags] # local web inspector (read-only)

starling mcp # read-only MCP server over stdio for AI clients

starling replay # headless replay (dual-mode binary only)

starling migrate [-dry-run] # apply pending schema migrations

starling schema-version # print the current schema version

starling doctor # quick health check: version, env vars, schema, validation

starling version # print the binary's Starling version (also -v / --version)

```

The stock binary is SQLite-only. Building a dual-mode binary that

links your agent factory enables `starling replay` and

`starling inspect` with replay re-execution.

## Examples [#examples]

| Path | What it shows |

| -------------------------- | -------------------------------------------------------------------------------------- |

| `examples/m1_hello` | Minimal hello agent, dual-mode inspector, OTel stdout exporter. |

| `examples/incident_triage` | Multi-tool agent, budgets, Resume, replay regression test, Postgres, Prometheus, OTel. |

# Replay-driven tests (/docs/replay-tests)

The replay model isn't just for production debugging. Treat a recorded

run as a test fixture, and `starling.Replay` becomes a regression test

that fails the moment your agent's logic shifts: refactored a tool,

changed a prompt, swapped a model, upgraded a dependency.

## The shape [#the-shape]

1. Record a real run once, against a real provider.

2. Commit the resulting event log as a test fixture.

3. In CI, replay the fixture against the same agent wiring (no provider

network call). Replay returns `nil` on byte-identical re-execution

and a typed `*replay.Divergence` otherwise.

That's it. No mocks, no recorded HTTP fixtures, no snapshot dance.

## Capture a fixture [#capture-a-fixture]

Run the agent once with a SQLite log under your test data dir:

```go title="capture/main.go"

package main

import (

"context"

"fmt"

"os"

starling "github.com/jerkeyray/starling"

"github.com/jerkeyray/starling/eventlog"

"github.com/jerkeyray/starling/provider/openai"

"github.com/jerkeyray/starling/tool"

)

func main() {

log, err := eventlog.NewSQLite("testdata/golden.db")

if err != nil { panic(err) }

defer log.Close()

prov, err := openai.New(openai.WithAPIKey(os.Getenv("OPENAI_API_KEY")))

if err != nil { panic(err) }

a := newAgent(prov, log) // your Agent constructor

res, err := a.Run(context.Background(), "What is the current UTC time?")

if err != nil { panic(err) }

fmt.Println("captured", res.RunID)

}

```

Run it once, commit `testdata/golden.db` and the captured `runID`. From

this point on you never touch the network for this test.

## Replay in CI [#replay-in-ci]

```go title="agent_test.go"

package agent_test

import (

"context"

"errors"

"testing"

starling "github.com/jerkeyray/starling"

"github.com/jerkeyray/starling/eventlog"

"github.com/jerkeyray/starling/replay"

)

const goldenRunID = "01HZ8PQ5...XKJ3"

func TestAgentMatchesRecording(t *testing.T) {

log, err := eventlog.NewSQLite("testdata/golden.db", eventlog.WithReadOnly())

if err != nil { t.Fatal(err) }

t.Cleanup(func() { _ = log.Close() })

a := newAgent(stubProvider(), log) // any non-nil provider; Replay swaps it

err = starling.Replay(context.Background(), log, goldenRunID, a)

if err == nil {

return // byte-identical

}

var d *replay.Divergence

if errors.As(err, &d) {

t.Fatalf("agent diverged from recording at seq=%d kind=%s class=%s reason=%s",

d.Seq, d.Kind, d.Class, d.Reason)

}

t.Fatal(err)

}

```

`starling.Replay` shallow-clones the Agent and overrides `Provider`

with a synthetic replay provider that yields chunks from the recording.

Your real provider is never contacted. The original `Agent.Provider`

must still be non-nil because `validate()` runs before the swap; any

stub will do.

Tools, on the other hand, **re-execute live**. The tool's `Execute`

method runs and its output bytes are compared to the recorded

`ToolCallCompleted` payload. A deterministic tool replays cleanly. A

tool that reads `time.Now()` directly produces a new timestamp on

replay and diverges. Wrap non-deterministic reads in `step.Now`,

`step.Random`, or `step.SideEffect` so replay returns the recorded

value.

## What "byte-identical" means [#what-byte-identical-means]

Replay compares each event the live loop emits to the recording at the

same `seq`. A divergence falls into one of four classes:

| `Class` | What it means |

| ----------- | --------------------------------------------------------- |

| `kind` | The loop produced a different event type at this `seq`. |

| `payload` | Same kind, different bytes. |

| `turn_id` | A turn started under a different `TurnID` than recorded. |

| `exhausted` | The loop ran past the end of the recording (extra event). |

The first divergence is reported. The recording is the source of truth.
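
The four classes can be read as a sequence of checks against the recorded event at the same position. The sketch below is an assumption about how such a comparison could look: the `event` type is a trimmed stand-in, `classify` is hypothetical, and the order of the checks is a guess rather than Starling's documented behaviour.

```go
package main

import (
	"bytes"
	"fmt"
)

// event is a trimmed stand-in: kind, turn id, and encoded payload.
type event struct {
	Kind    string
	TurnID  string
	Payload []byte
}

// classify compares the event produced at position i (0-based)
// against the recording. It returns "" on a match, otherwise the
// divergence class.
func classify(recorded []event, i int, produced event) string {
	switch {
	case i >= len(recorded):
		return "exhausted" // the loop emitted an extra event
	case recorded[i].Kind != produced.Kind:
		return "kind"
	case recorded[i].TurnID != produced.TurnID:
		return "turn_id"
	case !bytes.Equal(recorded[i].Payload, produced.Payload):
		return "payload"
	}
	return ""
}

func main() {
	recorded := []event{
		{Kind: "TurnStarted", TurnID: "t1"},
		{Kind: "ToolCallCompleted", TurnID: "t1",
			Payload: []byte(`{"utc":"2024-01-01T00:00:00Z"}`)},
	}
	// A tool that reads the wall clock directly returns new bytes on replay.
	produced := event{Kind: "ToolCallCompleted", TurnID: "t1",
		Payload: []byte(`{"utc":"2025-06-01T12:00:00Z"}`)}
	fmt.Println(classify(recorded, 1, produced)) // payload
}
```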

## Make tools replay-safe before you record [#make-tools-replay-safe-before-you-record]

Recording captures the values the loop consumed at `step` boundaries.
Any non-deterministic value read outside `step` isn't recorded, so it
can differ on every replay and cause a divergence. Common culprits:

* Reading `time.Now()` directly inside a tool. Use `step.Now(ctx)`.

* Calling `rand.Intn` inside a tool or middleware. Use `step.Random(ctx)`.

* Hitting an HTTP endpoint inside a tool without `step.SideEffect`.

* Using `os.Getenv` mid-run. Read once at construction or wrap in a

`step.SideEffect` keyed on the variable name.

If your test passes once and fails on the next run with no code change,

something non-deterministic leaked past `step`.

## What divergence catches [#what-divergence-catches]

Real things that flip the test from green to red:

* A model upgrade that changes tool-plan order.

* A prompt edit that changes `RunStarted.SystemPromptHash`.

* A tool that started returning slightly different JSON (whitespace,

field reordering, added field).

* A schema migration that changed the canonical CBOR shape of a

payload.

* A library upgrade that changed RNG seeding or a cost-table value.

Each is a real signal that today's build does something different from

the day you recorded the fixture.
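The JSON case is worth seeing in isolation. The sketch below assumes the comparison is a plain byte comparison, as described above; the helper names and the sample payloads are made up for illustration.

```go
package main

import (
	"bytes"
	"encoding/json"
	"fmt"
	"reflect"
)

// sameBytes is the strict comparison: any byte difference counts.
func sameBytes(a, b []byte) bool { return bytes.Equal(a, b) }

// sameJSON decodes both documents and compares the resulting values,
// ignoring whitespace and key order.
func sameJSON(a, b []byte) (bool, error) {
	var x, y any
	if err := json.Unmarshal(a, &x); err != nil {
		return false, err
	}
	if err := json.Unmarshal(b, &y); err != nil {
		return false, err
	}
	return reflect.DeepEqual(x, y), nil
}

func main() {
	recorded := []byte(`{"city":"Oslo","temp":4}`)
	today := []byte(`{"temp": 4, "city": "Oslo"}`)

	eq, _ := sameJSON(recorded, today)
	fmt.Println(sameBytes(recorded, today), eq) // prints "false true"
}
```

The two payloads mean the same thing to a JSON consumer, yet a byte-level check flags them. If a tool's output format is allowed to drift like this, emitting a stable encoding from the tool keeps fixtures green.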

## Provider / model mismatch [#provider--model-mismatch]

Before any turn replays, `Replay` cross-checks the agent's current
`Provider.ID` / `APIVersion` / `Config.Model` against the values
recorded in the log's `RunStarted` event. If any of the three
disagree, replay fails fast with `starling.ErrProviderModelMismatch`.
This is typically the "I edited the agent factory and forgot the
fixture is older" mistake, surfaced before the test produces a
confusing turn-1 byte diff.

```go

err := starling.Replay(ctx, log, runID, agent)

if errors.Is(err, starling.ErrProviderModelMismatch) {

t.Fatalf("agent wiring drifted from fixture: %v", err)

}

```

Override when the divergence is intentional (e.g. you re-recorded

the fixture and want the same test file to replay both shapes):

```go

err := starling.Replay(ctx, log, runID, agent, starling.WithForceProvider())

```

The CLI equivalent is `starling replay --force`. With `--force`, all
other replay invariants still apply (chunks, tool output bytes,
step-name lookups), so the only thing relaxed is the provider/model
identity check itself.

## Multiple fixtures [#multiple-fixtures]

Capture more than one run, one per behavior you care about: happy

path, tool-error path, budget trip, multi-turn refinement. Each gets a

test. The fixtures live under `testdata/`; the test file picks a

`runID` per case.

For sprawling fixtures, the `starling export` CLI dumps a run to NDJSON

so you can review what's recorded and trim noise before committing.

## CI shape [#ci-shape]

A typical CI job looks like:

```yaml title=".github/workflows/test.yml"

- name: Replay golden runs
  run: go test ./... -run TestAgentMatches -count=1

```

No `OPENAI_API_KEY`, no Anthropic key, no network. The fixture is the

contract. If your test suite fails on a PR that should be a no-op,

replay points at the exact `seq` where today's behavior diverged from

the day the fixture was recorded.

## Limits [#limits]

Replay verifies *behavior under the same wiring*. It does not catch:

* Bugs in code paths your fixture didn't exercise. Coverage still

matters; pick fixtures deliberately.

* Provider regressions on the *real* network. That's still on you to

monitor with the metrics from [Operations](/docs/operations).

* Issues that only manifest under concurrency or scale. Replay is

single-run.

What it does catch is the class of change most agent codebases miss

entirely: *"my code looks the same and the model still works, but

the agent quietly does something different now."*

## Where to next [#where-to-next]

- [Concepts](/docs/concepts): The determinism contract behind why this works.

- [Reference · replay](/docs/reference#replay): Verify, Stream, Divergence, ErrNonDeterminism.

# HTTP daemon (/docs/starlingd)

`package starlingd` turns your own `*starling.Agent` wiring into a

small private HTTP service. Use it when another app needs to enqueue

runs, stream progress, read recorded events, scrape Prometheus

metrics, and open the inspector next to the API.

`starlingd` is intentionally not a distributed job system. The current

queue is in-process FIFO. Use one daemon process per queue, and put

durable orchestration above it if you need cross-process failover.

## Minimal binary [#minimal-binary]

```go

package main

import (
	"context"
	"os"

	starling "github.com/jerkeyray/starling"
	"github.com/jerkeyray/starling/provider/openai"
	"github.com/jerkeyray/starling/starlingd"
)

func buildAgent(ctx context.Context) (*starling.Agent, error) {
	prov, err := openai.New(openai.WithAPIKey(os.Getenv("OPENAI_API_KEY")))
	if err != nil {
		return nil, err
	}
	return &starling.Agent{
		Provider: prov,
		Config:   starling.Config{Model: "gpt-4o-mini", MaxTurns: 4},
		// starlingd overwrites Log and Metrics for every run.
	}, nil
}

func main() {
	if len(os.Args) > 1 && os.Args[1] == "serve" {
		if err := starlingd.Command(buildAgent).Run(os.Args[2:]); err != nil {
			panic(err)
		}
		return
	}
	panic("usage: my-agent serve [flags] ")
}

```

Run it:

```bash

STARLINGD_TOKEN=secret my-agent serve --addr 127.0.0.1:8080 starling.db

```

## Flags [#flags]

| Flag | Default | Meaning |

| ----------------- | ---------------- | ------------------------------------------------------------------------------------------------------------------------- |

| `--addr` | `127.0.0.1:8080` | HTTP bind address. |

| `--token` | empty | Bearer token. `STARLINGD_TOKEN` is also read. Empty disables auth. |

| `--workers` | `4` | Number of in-process run workers. |

| `--queue` | `100` | Maximum queued runs. |

| `--job-retention` | `5m` | How long terminal in-memory job status is retained after completion, cancellation, or failure. Negative disables cleanup. |

| `--no-inspect` | `false` | Disable the inspector mount. |

## HTTP API [#http-api]

All endpoints require `Authorization: Bearer <token>` when auth is
configured, including `/metrics` and `/inspect/`.

| Method | Path | Meaning |

| ------ | -------------------------------------------------------- | ------------------------------------------------------------------------- |

| `GET` | `/api/v1/healthz` | Process liveness. |

| `GET` | `/api/v1/readyz` | Event log preflight and queue capacity. |

| `POST` | `/api/v1/runs` | Enqueue a run. Body: `{"goal":"..."}`. Returns `202` with `run_id`. |

| `GET` | `/api/v1/runs?limit=50&offset=0&status=completed&q=text` | List runs from the event log. |

| `GET` | `/api/v1/runs/{runID}` | Run summary/detail. Queued runs return daemon status before events exist. |

| `GET` | `/api/v1/runs/{runID}/events?limit=200&offset=0` | Raw event page. |

| `GET` | `/api/v1/runs/{runID}/stream` | Server-sent events for history plus live updates. |

| `POST` | `/api/v1/runs/{runID}/cancel` | Cancel a queued or running in-process job. |

| `GET` | `/metrics` | Prometheus metrics. |

| `GET` | `/inspect/` | Inspector, when enabled. |

Create and stream a run:

```bash

curl -sS -H "Authorization: Bearer secret" \
  -H "Content-Type: application/json" \
  -d '{"goal":"summarize this incident"}' \
  http://127.0.0.1:8080/api/v1/runs

# RUN_ID holds the run_id returned by the POST above.
curl -N -H "Authorization: Bearer secret" \
  "http://127.0.0.1:8080/api/v1/runs/$RUN_ID/stream"

```

The stream emits SSE events named `status`, `event`, `done`, and

`error`. `event` payloads include `seq`, `kind`, `timestamp`, hash

fields, and the decoded event payload.

The `/events` endpoint paginates inside the event-log backend when the
backend supports it. The default `limit` is 200 and the maximum accepted
`limit` is 1000; use `offset` to walk long runs without loading the whole
event stream.

`daemon_status` is best-effort process memory for recently queued,

running, or terminal jobs. It is retained for `--job-retention`, then

discarded. Use the log-derived `status` field as the authoritative run

state.

## Programmatic server [#programmatic-server]

Use `starlingd.New` when you already own HTTP server setup:

```go

log, _ := eventlog.NewSQLite("starling.db")

inspectorLog, _ := eventlog.NewSQLite("starling.db", eventlog.WithReadOnly())

reg := prometheus.NewRegistry()

metrics := starling.NewMetrics(reg)

srv, err := starlingd.New(starlingd.Config{

Factory: func(context.Context) (*starling.Agent, error) {